Data Center Flexibility: Chapter 10 + 11

Chapter 10: Measuring success and tracking progress; Chapter 11: From pilots to progress: a path forward

Chapter 10: Measuring success and tracking progress

As flexible data centers begin to deploy, big questions still need answering. The most important is: “Can data center flexibility provide value at scale and resolve the key risks and challenges identified?” The long‑term viability of flexibility depends on execution: whether it improves reliability, accelerates interconnection, lowers total system costs, and reduces emissions while supporting continued digital growth. Flexibility only becomes a trusted planning and regulatory tool when outcomes are visible, verifiable, and comparable across regions and programs.

This chapter looks at what “success” means for data center flexibility (section 10.1). It also looks at how success can be measured, governed, and monitored over the short, medium, and long term (sections 10.2 to 10.4).

10.1 What does success look like?

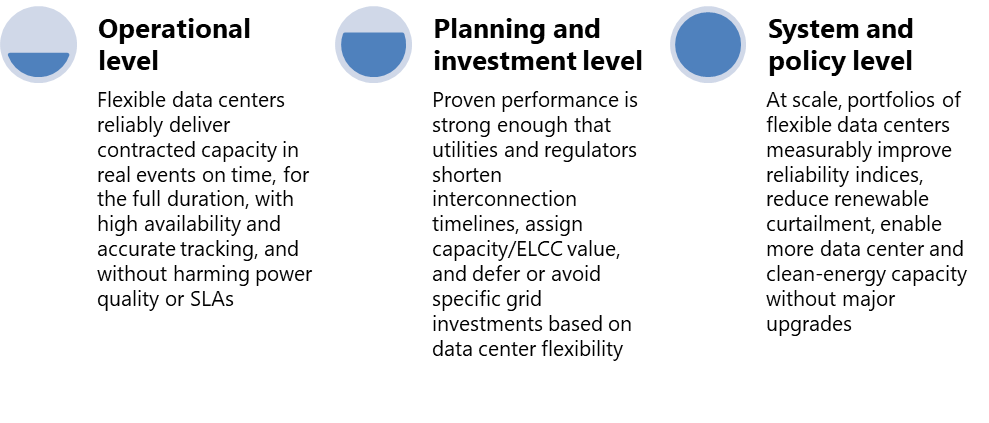

In this context, success for data center flexibility is more than “the event didn’t fail.” It is defined in three dimensions: operational, planning and investment, and system and policy (Figure 42).

Operational level: Does it deliver reliably in real events? Operationally, success means flexible data centers consistently deliver what they commit to: the contracted MW/MWh, within required response times and durations, with high availability and accurate tracking of dispatch profiles, and without degrading local power quality or SLA levels.

Planning and investment level: Does it accelerate the interconnection timeline and infrastructure investment? Planning and investment success means that flexibility is reliable and predictable enough for utilities, system operators, and data center operators to incorporate it into system planning and infrastructure investment decisions. In practice, this means that verified performance leads utilities, ISOs, and regulators to shorten interconnection timelines for flexible facilities, credit data center flexibility with capacity or ELCC value, and defer or avoid specific transmission, distribution, and generation investments.

System and policy level: Does it improve system reliability, growth, decarbonization, and siting outcomes at scale? Success at the system and policy level is defined by broader outcomes: improved reliability and resilience, progress toward decarbonization goals, the ability to accommodate more digital load in constrained grids, a lower burden for other ratepayers, and strong support from the public, operators, and regulators.

10.2 Potential metrics or KPIs

An effective measurement framework must cover all three dimensions of success, aligned to the short, medium, and long term. These layers mirror the deployment pathway outlined in this report: from first-of-a-kind (FOAK) pilots to scaled portfolios and, ultimately, standard practice across data center fleets and utility tariffs. The scorecards in this section translate objectives into concrete measures: curtailment performance and tracking accuracy in the short term; interconnection timelines and accredited capacity value in the medium term; and system reliability, decarbonization outcomes, and policy uptake in the long term.

10.2.1 Operational performance - Short term metrics

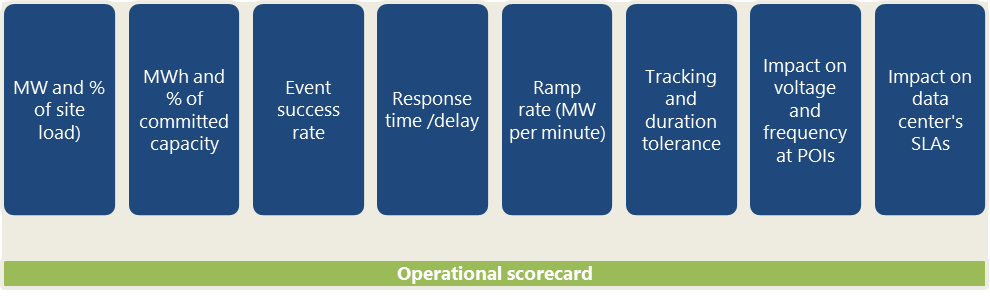

These metrics should be derived from standardized telemetry and measurement and verification methods tied directly to defined flexibility products and operating envelopes. For operators, the goal is to demonstrate that flexible operation is proven, auditable, and dependable under real system conditions, including periods of stress, not only in controlled demonstrations.

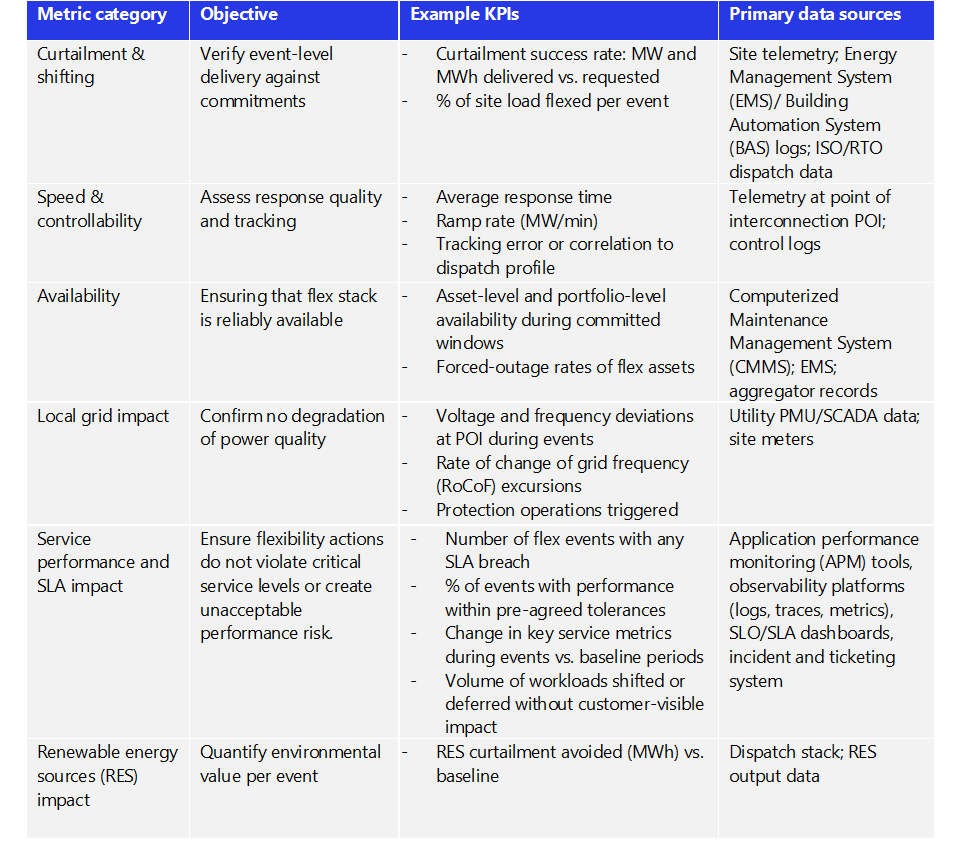

This scorecard can build on established international flexibility programs, such as those in the UK and EU, adapted to U.S. market design, data-center-centric operation, and local grid conditions (Table 26).

In short, the key metrics focus on event-level performance. For utilities and system operators, they include curtailment success rates, response speed, ramping accuracy, asset availability, and observed impact on voltage and frequency at the point of interconnection. For data center operators, the most important metric is service performance and SLA impact.
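As a rough illustration of how the event-level metrics above could be computed, the sketch below scores a handful of dispatch events for delivery and response time. The `FlexEvent` schema, the sample numbers, and the 95% delivery threshold are all assumptions made for this example; actual programs define their own baselines, tolerances, and measurement and verification rules.

```python
from dataclasses import dataclass


@dataclass
class FlexEvent:
    """One dispatch event for a flexible data center (hypothetical schema)."""
    committed_mw: float    # contracted reduction for this event
    delivered_mw: float    # metered reduction actually achieved
    response_s: float      # seconds from dispatch signal to full response
    max_response_s: float  # response time required by the product


def delivery_ratio(event: FlexEvent) -> float:
    """Delivered reduction as a share of the commitment, capped at 1.0."""
    if event.committed_mw <= 0:
        raise ValueError("committed_mw must be positive")
    return min(event.delivered_mw / event.committed_mw, 1.0)


def is_success(event: FlexEvent, min_ratio: float = 0.95) -> bool:
    """An event succeeds if it delivers enough, fast enough.

    The 95% threshold is an illustrative assumption, not a program rule.
    """
    on_time = event.response_s <= event.max_response_s
    return on_time and delivery_ratio(event) >= min_ratio


def curtailment_success_rate(events: list[FlexEvent]) -> float:
    """Share of events that met both the delivery and response-time tests."""
    if not events:
        raise ValueError("no events to score")
    return sum(is_success(e) for e in events) / len(events)


# Illustrative values only; they are not drawn from any real program.
events = [
    FlexEvent(committed_mw=20.0, delivered_mw=20.5, response_s=45, max_response_s=60),
    FlexEvent(committed_mw=20.0, delivered_mw=17.0, response_s=50, max_response_s=60),
    FlexEvent(committed_mw=20.0, delivered_mw=19.8, response_s=90, max_response_s=60),
    FlexEvent(committed_mw=20.0, delivered_mw=19.5, response_s=30, max_response_s=60),
]
rate = curtailment_success_rate(events)
print(f"Curtailment success rate: {rate:.0%}")
```

In practice the same calculation would run on metered telemetry rather than hand-entered values, and the threshold would come from the relevant product definition.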

10.2.2 Planning and investment level - Medium-term metrics

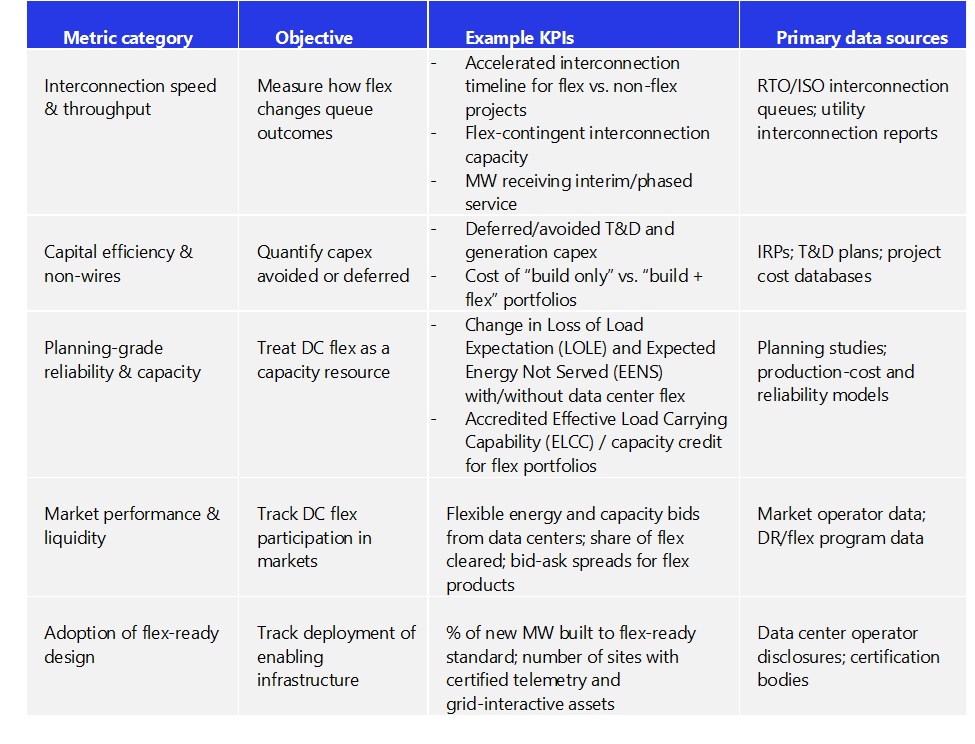

Over time, flexibility will be judged by whether it changes how grids are planned, financed, and interconnected. Metrics at this layer should align with integrated resource plans, interconnection reform efforts, and state and federal planning processes.

Key indicators include reductions in interconnection timelines, deferred or avoided transmission and distribution investments, verified capacity contributions such as ELCC, and the adoption rate of flex-ready designs and enabling technologies across new and retrofitted facilities. Together, these measures indicate whether flexibility is being embedded into planning and capital decisions or remains a last-resort operational tool (Table 27).

10.2.3 System impact and policy level - Long-term metrics

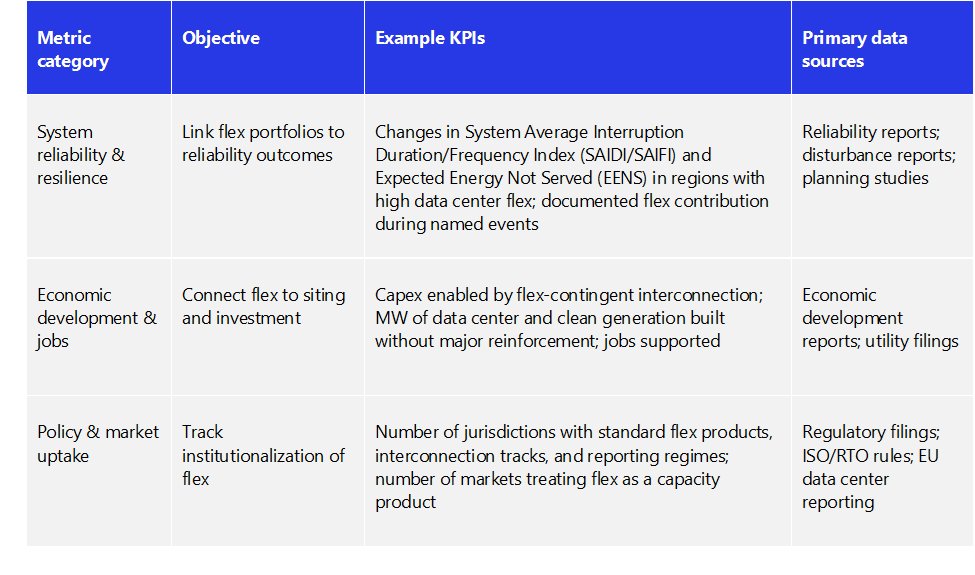

Measurement at this level focuses on changes in resilience and reliability indices, additional MW of data center and clean-energy capacity enabled without major system upgrades, and the adoption of formal products, tariffs, interconnection pathways, and reporting regimes that explicitly recognize data center flexibility. When these outcomes are visible in system data and public reporting, flexibility has become a structural feature of the power system rather than an experiment at its edge.

At scale, flexibility’s value is measured through system-level outcomes: improved resilience and reliability, and lower total system costs through more efficient use of existing infrastructure and capital.

Representative metrics include contributions to grid reliability, ratepayer savings from avoided infrastructure buildout, and the number of jurisdictions adopting standardized flexibility products, interconnection tracks, and telemetry frameworks.

These indicators connect individual project decisions to national policy objectives, including clean-power targets, community acceptance of large loads, and industrial strategy for AI and digital infrastructure (Table 28).

10.3 Governance and transparency

These metrics only build trust when they are verified across regions and publicly visible. Experience from established international DER and flexibility programs in the EU, UK, and elsewhere points to three governance requirements:

Independent testing and accreditation of performance and compliance

Regular public reporting of capacity, utilization, costs, and emissions

Standardized baselines and accounting methods to ensure comparability

A credible governance layer rests on these three elements. First, independent testing and accreditation of both technical capability and ongoing performance, so that flexibility claims are validated beyond self-reporting. Second, regular public reporting of enrolled capacity, utilization, avoided costs, and reliability outcomes, so that benefits and trade-offs become visible to regulators, communities, and investors. Third, standardized baselines, telemetry, and accounting methods, so that results are comparable across utilities, ISOs, and jurisdictions and can be aggregated without distortion.

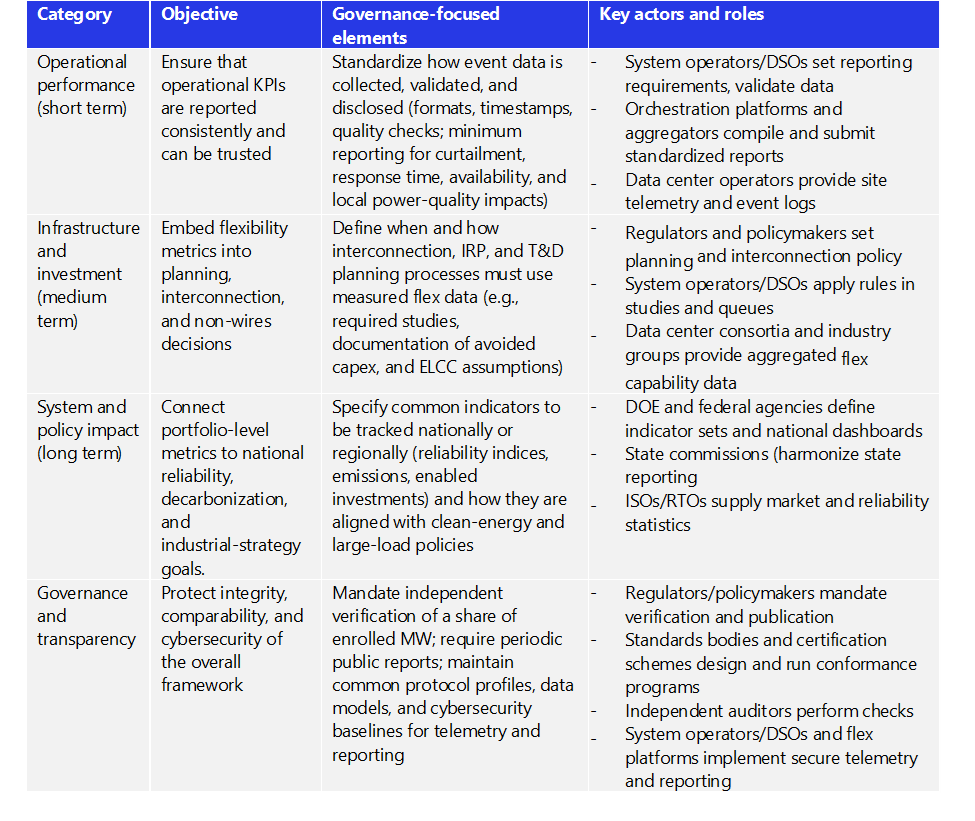

In the U.S., the Department of Energy (DOE), the Federal Energy Regulatory Commission (FERC), RTOs, and state commissions are the natural conveners for this layer, building on existing transparency regimes in capacity markets, resource adequacy, transmission planning, and demand response. Over time, a national “data-center-centric flexible load” database could publish standardized statistics on enrolled MW, activations, avoided system costs, emissions impacts, and performance factors. Table 29 summarizes the governance approach across three dimensions: operational performance, infrastructure and investment outcomes, and system and policy impact. This gives utilities, operators, and regulators a shared framework for what must be measured, who owns each dataset, and how often it should be reported, creating a transparent chain from site-level telemetry to system-level and national-level decision making.
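To make the idea of standardized, aggregable reporting more concrete, the sketch below rolls site-level records up into regional totals. The `SiteReport` fields, the region labels, and all values are hypothetical; a real database schema would be set by the convening bodies and would likely carry more fields, such as emissions impacts and performance factors.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class SiteReport:
    """One site's record for a reporting period (hypothetical schema)."""
    region: str              # placeholder label for an RTO/ISO or utility area
    enrolled_mw: float       # flexible capacity enrolled in programs
    activations: int         # dispatch events during the period
    avoided_cost_usd: float  # avoided system cost attributed to the site


def aggregate_by_region(reports):
    """Roll site-level records up into region-level totals."""
    totals = defaultdict(
        lambda: {"enrolled_mw": 0.0, "activations": 0, "avoided_cost_usd": 0.0}
    )
    for report in reports:
        region_total = totals[report.region]
        region_total["enrolled_mw"] += report.enrolled_mw
        region_total["activations"] += report.activations
        region_total["avoided_cost_usd"] += report.avoided_cost_usd
    return dict(totals)


# Illustrative values only; they are not drawn from any real program.
reports = [
    SiteReport("Region A", enrolled_mw=40.0, activations=6, avoided_cost_usd=250_000.0),
    SiteReport("Region A", enrolled_mw=25.0, activations=4, avoided_cost_usd=120_000.0),
    SiteReport("Region B", enrolled_mw=60.0, activations=9, avoided_cost_usd=410_000.0),
]
regional = aggregate_by_region(reports)
for region, stats in sorted(regional.items()):
    print(region, stats)
```

Because every site reports the same fields in the same units, the totals can be summed directly; this is the practical meaning of results that can be aggregated without distortion.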

10.4 Shared accountability

Because flexibility depends on coordinated action across the ecosystem, accountability for outcomes must also be shared.

Utilities and system operators should use measured performance to calibrate accreditation rules, large-load tariffs, and non-wires alternatives, raising or lowering accredited capacity and compensation based on delivery, as demonstrated by Ireland’s DS3 performance scalars and other international case studies (Chapter 9).

Data center operators can use verified results to support siting and permitting, negotiate interconnection and tariff terms, and justify investments in grid-interactive designs and telemetry. They can also commit to publishing annual flexibility transparency reports aligned with the scorecards in this chapter.

Regulators can adjust incentives, cost-recovery mechanisms, and consumer protections based on observed reliability, cost, and emissions outcomes instead of static assumptions.

Technology providers and aggregators should treat measurement, cyber‑secure telemetry, and open data formats as core product requirements rather than after‑the‑fact reporting features.
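The idea of raising or lowering compensation based on delivery, described above for utilities and system operators, can be sketched as a simple performance scalar. This is an illustrative construction, not the DS3 formula: the averaging rule, the cap on over-delivery, the optional floor, and the sample numbers are all assumptions.

```python
def performance_scalar(delivery_ratios, floor=0.0):
    """Average delivery ratio across events, clamped to the range [floor, 1.0].

    Each ratio is delivered MW divided by committed MW for one event.
    Over-delivery is capped at 1.0 so it cannot offset under-delivery.
    """
    if not delivery_ratios:
        raise ValueError("at least one event is required")
    capped = [min(max(ratio, 0.0), 1.0) for ratio in delivery_ratios]
    average = sum(capped) / len(capped)
    return max(floor, average)


def adjusted_payment(base_payment_usd, delivery_ratios, floor=0.0):
    """Scale a base payment by the performance scalar."""
    return base_payment_usd * performance_scalar(delivery_ratios, floor)


# Illustrative values only: four events, one under-delivered and one over-delivered.
ratios = [1.0, 0.8, 0.9, 1.1]
scalar = performance_scalar(ratios)
payment = adjusted_payment(100_000.0, ratios)
print(f"scalar={scalar:.3f}, payment=${payment:,.0f}")
```

A real program would specify how events are weighted, how baselines are set, and whether persistent under-delivery also reduces accredited capacity, not just payment.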

Turning measurement into progress

Metrics are not an end state; they are the feedback loop that enables learning and scale. Consistent reporting allows successful models to expand across regions while quickly identifying approaches that need redesign.

The near-term opportunity for the U.S. is to do what leading international markets already do: publish regular, national-level reports on flexible capacity, activations, and avoided costs that explicitly include data centers. Over time, these datasets will support more advanced tools, from capacity accreditation and insurance products to integrated planning assumptions and public dashboards.

When all parties operate from a common scorecard, flexibility shifts from a promise to a contractible reality. Flexibility has demonstrated technical potential. The next step is to demonstrate impact systematically, transparently, and at scale.

Chapter 11: From pilots to progress: a path forward

This framework has shown that data center flexibility is technically feasible, economically compelling, and already emerging in pilots and early programs across the U.S. and EU, with growing support from providers, communities, and financiers. The remaining challenge is execution: how to translate this potential into a coordinated and de-risked agenda that matches the speed and scale of AI-driven data center growth. This chapter synthesizes the insights from chapters 1 through 10 and highlights the actions they imply. It translates this framework into a structured path forward for utilities and grid operators, data center developers and operators, regulators, technology providers, communities, and financiers.

11.1 Key takeaways through chapters 1 to 10

11.1.1 Chapter 1: Mismatch between digital demand and power delivery

Chapter 1 identified the core challenge. Data center demand is projected to triple by 2028, yet transmission, generation, and traditional interconnection processes can take 7 to 10 years to deliver new capacity. This timing mismatch is clearest in primary data center markets, such as Northern Virginia, Chicago, Arizona, and some major EU markets, where clustered data center growth is colliding with constrained grid headroom and long permitting horizons.

The traditional approach of building new transmission lines and generation plants is increasingly misaligned with the pace of data center growth. It is also costly and faces rising opposition from local communities.

Meanwhile, detailed load and system analyses show many systems are constrained for only a limited number of peak hours each year. This reveals significant latent capacity that can be unlocked if peaks are actively managed through targeted flexibility.
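The point that a system may be constrained for only a few hours can be illustrated by counting the hours in which load exceeds a grid limit. The sketch below uses a synthetic 24-hour profile and a made-up limit; both are assumptions for illustration and do not reproduce the analyses referenced in Chapter 1, which would use a full year of hourly data.

```python
def constrained_hours(hourly_load_mw, limit_mw):
    """Count the hours in which load exceeds the available grid limit."""
    return sum(1 for load in hourly_load_mw if load > limit_mw)


def max_shave_needed_mw(hourly_load_mw, limit_mw):
    """Largest single-hour reduction needed to keep load within the limit."""
    return max(0.0, max(hourly_load_mw) - limit_mw)


# Synthetic 24-hour profile in MW; values are illustrative only.
hourly_load_mw = (
    [700.0] * 8 + [850.0] * 8 + [960.0, 1010.0, 1040.0, 990.0] + [800.0] * 4
)
limit_mw = 1000.0  # hypothetical grid limit

hours = constrained_hours(hourly_load_mw, limit_mw)
shave = max_shave_needed_mw(hourly_load_mw, limit_mw)
print(f"{hours} of {len(hourly_load_mw)} hours exceed the limit; "
      f"up to {shave:.0f} MW of flexibility would cover them")
```

In this toy profile the limit is binding in only a small share of hours, which is the pattern that makes targeted flexibility an alternative to building for the absolute peak.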

Chapter 1 reframed flexibility not as an optional add-on but as a system-level tool for closing the timing gap between digital infrastructure growth and grid expansion. Three implications follow:

Developers and data center operators should treat grid timing, regional constraints, and flex‑contingent interconnection options as primary design and siting criteria.

Utilities and grid operators should adopt flex-contingent and provisional interconnection pathways that let large loads connect earlier in exchange for credible flexibility commitments during a defined set of high-stress hours.

Regulators and policymakers in constrained regions should prioritize targeted pilots and rulemakings that test flexible large-load constructs, using these regions as laboratories for scalable, system-wide solutions.

11.1.2 Chapter 2: Engineer flexibility into the four pillars

Chapter 2 introduced the four pillars: compute, energy resources, infrastructure, and contractual flexibility. Together, these four pillars define standardized flexibility design and operating envelopes. They scale across facilities and portfolios, giving utilities, operators, regulators, and vendors a common definition of what “flex-ready” means in practice. Three implications stand out:

Adopt flex-ready design baselines: Flexibility needs to be designed in from the start. New data centers should be built with controllable IT systems, an orchestration platform, and on-site flex resources such as BESS, interactive UPS, gensets, or advanced cooling systems.

Integrate on-site assets into grid support: On-site batteries, generators, and other on-site resources should be sized, controlled, and contracted not only for backup but also for structured grid support under clear contractual agreements and operating rules.

Design contracts and tariffs tailored to a data center’s operating characteristics: Flex-contingent interconnection agreements, tariffs, and customer SLAs should explicitly define flexibility windows, response times, load priorities, and compensation mechanisms, rather than assuming a binary “on or off” participation model like emergency response.
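One way to picture an explicitly defined flexibility envelope is as a small data structure that a dispatch request can be checked against. The sketch below is a simplified assumption: the field names, limits, and sample requests are hypothetical, load priorities and compensation terms are left out, and the flexibility window is assumed not to wrap past midnight.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FlexEnvelope:
    """Contracted limits on how a site may be dispatched (hypothetical fields)."""
    max_reduction_mw: float   # largest load reduction the site commits to
    max_duration_h: float     # longest single event
    min_notice_min: float     # shortest notice the site can respond to
    max_events_per_year: int  # annual cap on dispatch events
    window_start_hour: int    # first hour of the flexibility window (0-23)
    window_end_hour: int      # hour at which the window closes (1-24)


@dataclass(frozen=True)
class DispatchRequest:
    """A single dispatch request from the grid side (hypothetical fields)."""
    reduction_mw: float
    duration_h: float
    notice_min: float
    start_hour: int


def violations(envelope, request, events_so_far):
    """Return the reasons, if any, that a request falls outside the envelope."""
    problems = []
    if request.reduction_mw > envelope.max_reduction_mw:
        problems.append("reduction exceeds contracted MW")
    if request.duration_h > envelope.max_duration_h:
        problems.append("duration exceeds contracted limit")
    if request.notice_min < envelope.min_notice_min:
        problems.append("notice shorter than contracted minimum")
    if events_so_far >= envelope.max_events_per_year:
        problems.append("annual event cap already reached")
    end_hour = request.start_hour + request.duration_h
    if (request.start_hour < envelope.window_start_hour
            or end_hour > envelope.window_end_hour):
        problems.append("event falls outside the flexibility window")
    return problems


# Illustrative values only.
envelope = FlexEnvelope(15.0, 4.0, 30.0, 20, window_start_hour=14, window_end_hour=21)
ok_request = DispatchRequest(reduction_mw=10.0, duration_h=3.0, notice_min=60.0, start_hour=16)
bad_request = DispatchRequest(reduction_mw=18.0, duration_h=3.0, notice_min=10.0, start_hour=19)
print(violations(envelope, ok_request, events_so_far=5))
print(violations(envelope, bad_request, events_so_far=5))
```

Expressing the envelope this way lets a request be accepted, rejected, or renegotiated against specific terms rather than an all-or-nothing participation rule.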

11.1.3 Chapter 3: Coordinate the six-tier ecosystem

Chapter 3 mapped the six-tier ecosystem: 1) grid operators and utilities, 2) aggregators/orchestration platforms, 3) data center operators and developers, 4) on-site assets, 5) standards and community bodies, and 6) financial stakeholders. It also highlighted how current fragmentation and trust gaps limit scale.

The chapter emphasized that technology alone is not enough. Progress depends on clarifying roles, interfaces, and incentives across all tiers so that data center flexibility can be planned, procured, and relied on as a system resource. Several practical implications follow:

Grid operators and utilities (Tier 1) should define clear, data center-centric flexibility products, interconnection options, and reliability requirements, including baseline and telemetry expectations.

Aggregators and orchestration platforms (Tier 2) should build data center-centric portfolios that coordinate the four pillars of flexibility (compute, energy resources, infrastructure such as cooling, and contractual flexibility) across sites, using standardized signals and settlement methods that align with market rules and SLAs.

Data center operators and developers (Tier 3) should commit to transparent flexibility envelopes, share necessary operational data under robust governance, and align internal risk and decision frameworks with external program requirements.

On-site asset providers (Tier 4) should develop and deliver grid-interactive technologies, such as UPS/battery systems, thermal storage, and backup generation, with certified performance, telemetry, and cybersecurity profiles that make them “plug-in” components of flex portfolios.

Standards bodies and financial actors (Tiers 5 and 6) should anchor this ecosystem in common technical profiles, certification schemes, and due diligence expectations, making flexibility claims investable and auditable.

11.1.4 Chapter 4: Quantify value and impact of flexibility

Chapter 4 quantified how data center flexibility translates into tangible system, commercial, and community value. It showed that flexibility can substantially reduce system costs, defer T&D upgrades, and generate significant environmental and economic benefits.

Even a few percent of flexibility (e.g., 2.5%-5%) can free up gigawatts of headroom in a constrained system. This reduces the need for costly and time-consuming transmission upgrades and new coal or other fossil generation buildout.
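A back-of-the-envelope version of this arithmetic is sketched below. It rests on one simple reading, which is an assumption here rather than the method used in Chapter 4: that the flexibility percentage is the share of a system's peak load that can be curtailed during peak hours. The 100 GW peak is a hypothetical round number, not a figure from this report.

```python
def freed_headroom_gw(peak_load_gw, flex_share):
    """Headroom freed if a given share of peak load can be curtailed at peak."""
    if not 0.0 <= flex_share <= 1.0:
        raise ValueError("flex_share must be between 0 and 1")
    return peak_load_gw * flex_share


HYPOTHETICAL_PEAK_GW = 100.0  # assumed system peak, not a figure from this report

for share in (0.025, 0.05):
    headroom = freed_headroom_gw(HYPOTHETICAL_PEAK_GW, share)
    print(f"{share:.1%} flexibility -> {headroom:.1f} GW of headroom")
```

Under these assumptions, the 2.5%-5% range corresponds to a few gigawatts on a system of this size; the actual value depends on which loads are flexible, for how long, and in which hours.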

For data center operators and developers, this enables earlier interconnection and portfolio-level optimization. By committing to flexibility, data centers pull forward revenue while gaining regulatory and public support by actively stabilizing the grid rather than passively consuming power.

Communities, in turn, benefit from earlier jobs, tax base expansion, and infrastructure investment without bearing the full cost and environmental burden of assets built solely to serve firm large-load demand.

Three actions follow from this analysis:

Embed flexibility value into planning: Utilities and regulators should explicitly model avoided capacity, deferred transmission and distribution investments, reduced curtailment, and improved hosting capacity when evaluating flex‑enabled data center projects versus inflexible alternatives.

Reflect value in tariffs and business cases: Large-load tariffs, rate structures, and corporate investment decisions should reflect the system value of flexibility through discounts, credits, or performance-based compensation, rather than treating flexible design as an unpriced externality.

Provide transparent information to gain public support: Communities and policymakers should have access to clear, quantitative narratives showing how flex-enabled data centers can bring forward tax revenue, jobs, and infrastructure upgrades while reducing the need for new lines or on-site fossil plants.

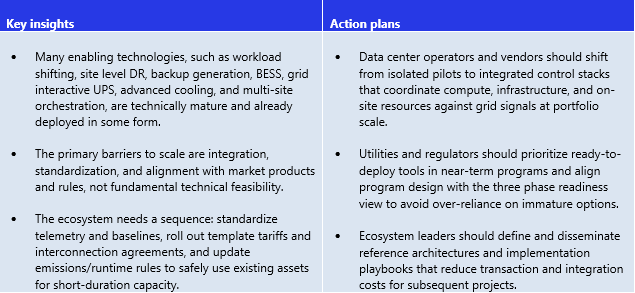

11.1.5 Chapter 5: Move from technology readiness to portfolio deployment

Chapter 5 evaluated the technology readiness and adoption levels for key flexibility tools. It also mapped them into three phases: ready‑to‑deploy (next 18 months), emerging (2027–2028), and development pipeline (2029–2030).

Chapter 5 argued that most core technologies required for data center flexibility are maturing but have not yet been deployed at scale as a cohesive, data-center-specific system.

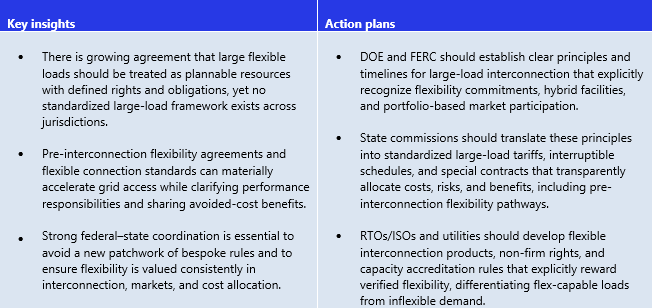

11.1.6 Chapter 6: Align regulation and policy with flexible large loads

Chapter 6 looked at how federal, regional, and state regulatory frameworks are adapting to rapid growth in large data center loads, spanning DOE initiatives, emerging FERC large-load interconnection reforms, RTO/ISO rulemaking, and state commission actions. The chapter showed that regulators are beginning to recognize large, flexible loads as system assets rather than passive demand, but a consistent, nationwide framework is still lacking.

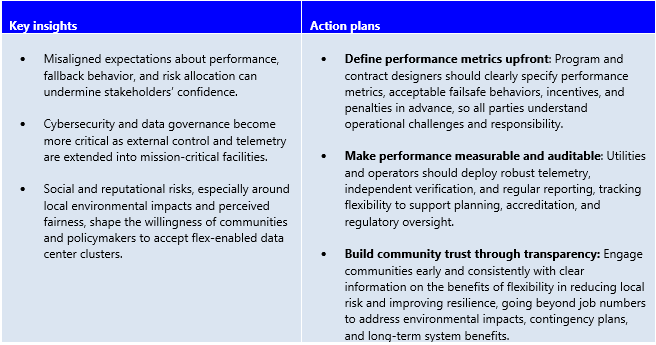

11.1.7 Chapter 7: Manage risk and build trust

Chapter 7 grouped the risks associated with data center flexibility into six categories: 1) system reliability, 2) regulatory and modeling risk, 3) social, political, and reputational risk, 4) cybersecurity and data risk, 5) counterparty and credit risk, and 6) facility-level operational risk. The chapter highlighted that trust is the binding constraint on scaling flexibility from pilots to full implementation. Data center flexibility will only be treated as a grid resource if utilities, operators, regulators, and communities can rely on how it performs under both normal and stressed system conditions.

11.1.8 Chapter 8: Build standards and interoperability framework

Chapter 8 set out the standards, communication, data model, API, certification, and cybersecurity foundations needed to scale data center flexibility across vendors, utilities, and jurisdictions. It highlighted that there is still no mature, dedicated technical standard for data center flexibility. Instead, most current efforts adapt general demand response and DER standards that were not designed for hyperscale, always‑on digital infrastructure.

From this, the chapter drew three main implications:

Standards bodies and industry consortia should finalize data center-specific profiles and APIs, prioritizing practical, implementable specifications for telemetry, control, and M&V so that flexibility can be integrated consistently into grid operations and markets.

Certification bodies and labs should develop test protocols and certification schemes that validate end-to-end flexibility performance, not just component capabilities, giving regulators, utilities, and financiers confidence in what data centers can deliver.

Operators and vendors should align internal architectures to these standards to enable multi-market participation without bespoke engineering each time, turning interoperability from a barrier into a baseline capability.

11.1.9 Chapter 9: Adapt international lessons to the U.S. power system

Chapter 9 benchmarked leading international flexibility programs, including the EU’s initiatives, Great Britain’s flexible connection frameworks, Ireland’s flex-contingent interconnection policies, China’s spatial flexibility strategies, and the Netherlands’ congestion platform (GOPACS). Most of these programs were designed and matured over the past decade for large C&I customers, storage, and distributed energy resources, with data centers participating indirectly via aggregators rather than as a distinct asset class. Even so, they provide robust implementation blueprints for rule-setting, performance-based incentives, and the integration of flexibility and demand response into planning and operations.

Ireland’s Large Energy Users Connection Policy stands out as the closest current analogue for data-center-specific flexibility. Its flex-contingent interconnection model explicitly requires large data centers to combine dispatchable on-site capacity, demand flexibility, and renewable sourcing in exchange for non-firm but accelerated grid access, with clear performance obligations and transparency requirements for system operators. This makes Ireland’s framework a particularly relevant reference point for U.S. regulators and system operators tailoring flex-contingent interconnection policies to local grid conditions while directly targeting data center growth.

From this, three priorities emerge for the U.S. power system:

Adapt proven design elements: U.S. regulators and operators should adapt proven design elements rather than starting from scratch. These include setting a national framework while tailoring adoption to local grids, and designing flexible connection agreements, performance scalars, and congestion markets around local conditions.

Avoid fragmentation by design: Multi-state and regional bodies, ISOs/RTOs, and FERC should work to avoid unnecessary divergence in product definitions and verification rules, especially where data center fleets span multiple RTOs/ISOs.

Use international experience to accelerate learning: Researchers and practitioners should continue structured learning from international business cases, focusing on governance, equity, and public acceptance as much as technical design.

11.1.10 Chapter 10: Track progress and accountability

Chapter 10 defined “success” across three dimensions: operational, planning and investment, and system and policy. It then proposed success metrics, governance, and accountability mechanisms, turning those concepts into a shared scorecard for the ecosystem. Four actions follow:

Adopt a shared scorecard: Utilities, operators, regulators, and data center leaders should agree on a core set of metrics, including accredited flexible capacity, event performance, avoided T&D and generation capex, interconnection timelines, and system-level reliability and emissions impacts, and report them consistently at the portfolio and system levels.

Link incentives and oversight to results: Compensation structures, regulatory approvals, and public reporting should be tied to these metrics, ensuring that flexibility is rewarded for real performance and not just enrollment.

Use metrics to refine programs: Over time, tracked outcomes should be used to refine product design, accreditation rules, planning assumptions, and non-wires decisions, turning flexibility into a feedback-driven, continuously improving part of resource planning.

Build governance around independent verification: Require standardized telemetry, independent third-party testing and accreditation, and regular public reporting (for example, via a national data-center-flex database) so that results can be tracked and trusted across utilities, RTOs/ISOs, and states.

11.2 One-page action map: who does what to scale data center flexibility

This action map clarifies who must act, on what, and when to move data center flexibility from pilots to standard practice. Each stakeholder group has a distinct role, but progress depends on coordinated execution across the ecosystem (Figure 43).

11.2.1 Utilities and grid operators (RTOs/ISOs)

Integrate flexibility into planning, operations, and interconnection

Define data-center-appropriate flexibility products, operating envelopes, and fallback rules

Create flex-contingent and provisional interconnection pathways tied to verified performance

Embed accredited data center flexibility into IRPs, transmission planning, and RA frameworks

Publish locational constraint data, flexibility needs, and performance results transparently

Near-term priorities: Standardize telemetry and baselines, launch flex-contingent interconnection tracks, and accredit flexibility conservatively but explicitly in planning models.

11.2.2 Data center operators and developers

Standardize flexibility in design and operations

Adopt flex-ready design baselines across compute, cooling, UPS/BESS, and controls

Define and commit to clear flexibility envelopes that protect SLAs and reliability

Provide auditable telemetry and participate in independent testing and verification

Use verified performance to support permitting, siting, and community engagement

Near-term priorities: Integrate grid-interactive controls into new builds, formalize flexibility tiers in SLAs, and move from site-level pilots to portfolio commitments.

11.2.3 Aggregators and orchestration platform providers

Translate grid needs into data-center-safe, portfolio-scale execution

Build data-center-centric orchestration that coordinates compute, cooling, and on-site assets

Implement interoperable APIs, telemetry, and M&V aligned with market and SLA requirements

Align back-to-back contracts so risks and penalties are consistent across counterparties

Support performance tracking, settlement, and reporting at portfolio scale

Near-term priorities: Deliver certified, interoperable control stacks that utilities can rely on without bespoke integration.

11.2.4 Regulators and policymakers (state, federal)

Set guardrails that enable speed without sacrificing reliability or equity

Establish principles for flex-enabled large-load interconnection and market participation

Translate federal guidance into tariffs, special contracts, and consumer protections

Require transparency on avoided costs, bill impacts, and performance outcomes

Align incentives with verified delivery rather than announced capability

Near-term priorities: Clarify large-load interconnection rules, enable flex-contingent pathways, and tie approvals to measurable outcomes.

11.2.5 Standards bodies, certification entities, and community stakeholders

Create legitimacy, interoperability, and social license

Finalize data-center-relevant profiles for telemetry, control, and cybersecurity standards

Develop certification and testing regimes that validate end-to-end flexibility performance

Embed transparency, fairness, and community impact considerations into program design

Maintain open forums for technical coordination and dispute resolution

Near-term priorities: Move from draft standards to implementable profiles and launch independent certification pathways.

11.2.6 Financial stakeholders, insurers, and auditors

Underwrite performance and discipline risk

Develop insurance, guarantees, and credit structures linked to verified flexibility delivery

Use standardized metrics to reduce price risk and avoid cliff-edge exposures

Support performance-linked bonds, LOCs, and insurance-backed guarantees

Require auditable data and governance as a condition for capital

Near-term priorities: Refine underwriting based on early loss and performance data and align financial products with accredited flexibility.

From framework to practice

No single player can scale data center flexibility on its own. Utilities need credible, verifiable performance. Data center operators need predictable rules and clearly bounded operational risk. Regulators need evidence that flexibility delivers real system and public benefits. Financiers need auditable risk and bankable structures. When these elements align, data center flexibility shifts from a bespoke pilot to a plannable and financeable system resource.

This report is designed to be actionable, translating concepts, frameworks, telemetry, and governance requirements into practical guidance that utilities, system operators, and data center operators can integrate into tariffs, interconnection procedures, and commercial agreements, shortening the path from concept to execution.

The foundation is already in place. What is needed now is leadership across utilities, data center operators, regulators, technology providers, and financiers to adopt shared standards, metrics, and governance and to move decisively from pilots to scale. The question is no longer whether flexibility will shape the next decade of digital infrastructure; it is who will lead in making it reliable, bankable, and real.