Data Center Flexibility: Chapter 3

Stakeholder Ecosystem Analysis

Rolling out data center flexibility to meet fast-growing demand has run into a problem: stakeholders are used to working one-to-one, coordinating only where they share a direct interface. Traditional market structures, which were largely designed for predictable demand patterns and supply-side solutions, were not built for the complexity, scale, and speed of today’s data center grid integrations. This analysis examines the current market inefficiencies, presents a six-tier coordination model for overcoming these barriers, and identifies the mechanisms needed to align stakeholder incentives at an unprecedented scale.

Success will require more than technology: real progress demands the transformation of the ecosystem. That means building trust, improving information sharing, clarifying regulations, reducing risks, and creating incentives that truly bring stakeholders together. Ultimately, the impact reaches far beyond one project; it raises the broader question of whether America’s digital infrastructure can keep up with growth while ensuring reliable, climate-responsible power.

3.1 Current market failures

3.1.1 Fragmented regulatory frameworks

Despite clear momentum, the path to large-scale flexibility remains patchy. Regulatory authority is split across multiple jurisdictions, timelines rarely align, and incentives often work at cross-purposes.

Over the last decade, regulators have started to recognize flexible demand as a real grid resource, but the frameworks are still incomplete. At the federal level, FERC Orders 841 and 2222 opened wholesale markets to aggregated flexible resources, such as storage, distributed generation, and responsive load, allowing them to participate in capacity, energy, and ancillary service markets.1,2 These frameworks opened access for non-traditional resources but left ambiguity about how large data centers, which participate through a mix of behind-the-meter (BTM) assets, workload flexibility, and contractual flexibility, fit within existing participation models. Multi-state portfolios must navigate overlapping jurisdictions that don’t yet recognize interstate benefits, creating legal and operational uncertainty across RTOs/ISOs and states.

FERC rulemaking and DOE “Speed to Power” initiative

Both FERC and the DOE are moving forward with significant grid reforms, driven by the sharp rise in power demand. The DOE has directed FERC to strengthen oversight and accelerate interconnection for loads above 20 MW.3 In its latest Advance Notice of Proposed Rulemaking (ANOPR), FERC is proposing to standardize large-load interconnection procedures and agreements across transmission providers, creating consistent timelines and requirements nationwide.4 Having requested comments by late November 2025, the agency is now drafting a formal proposed rule, with a target decision date of April 2026.5 If adopted, this would establish the first transparent national framework for large-load interconnection.

At the same time, the DOE launched the “Speed to Power” initiative6 in September 2025, aiming to resolve supply-side bottlenecks. The initiative focuses on accelerating the development of large-scale grid infrastructure projects for both transmission and generation. The DOE is requesting stakeholder input on investment opportunities, project readiness, load growth, and infrastructure constraints. The goal is to make the best use of the DOE’s funding and permitting authority to balance supply and demand.

Taken together, these efforts signal a major shift in U.S. energy policy by standardizing the process and accelerating large-load grid connections. Yet one essential piece is still missing: a framework that defines how data center flexibility can participate, how it should be measured, and how its value should be recognized across markets. Until that structure exists, reforms will speed up connections but will not fully unlock the scale, reliability, and cost savings that flexibility can provide.

State-by-state and regional variation

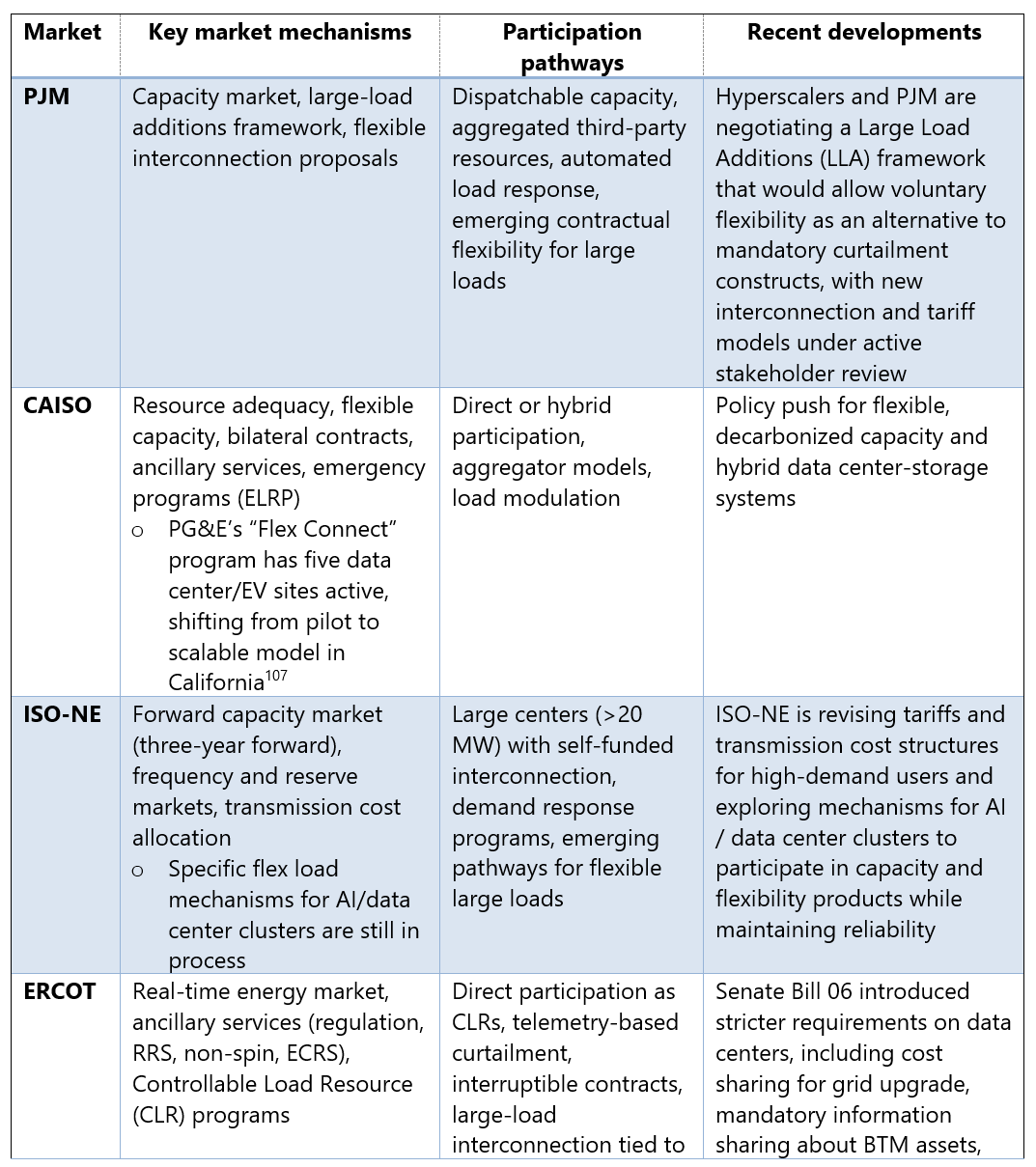

Until DOE and FERC finalize standardized rules for large flexible loads, the path forward remains fragmented across regions. Operators must navigate a different regulatory playbook for every site, shaped by RTOs, ISOs, and state authorities. For example, SPP’s 90-day High Impact Large Load (HILL) interconnection contrasts sharply with typical timelines that can exceed five years in other regions, creating regulatory arbitrage opportunities that can distort site selection.7

This variation extends to environmental regulations, permitting requirements, and market access rules that affect the economic viability of flexibility programs. The result is a patchwork of regulatory environments that discourages standardized approaches and raises development costs for both data centers and utilities.

Some regions are testing new approaches to flexibility (Figure 19). PJM is piloting aggregated flexibility portfolios and performance-based participation models to speed up interconnections.8 In California, CAISO has expanded its emergency load reduction and hybrid resource programs to incorporate flexible demand.9 Meanwhile, ISO-NE, ERCOT, and NYISO are revising their capacity and tariff structures to encourage verified flexibility while ensuring reliability.10,11

For flexibility to scale as a mainstream grid resource, it will require coordination from FERC, state commissions, utilities, and hyperscalers. This coordination must align incentives, verification methods, and market access across regions.

3.1.2 Siloed approaches and coordination gaps

The biggest barrier to data center flexibility deployment is the lack of coordination between multiple stakeholders, who embrace different strategies.

Utility planning and rate design

Utilities generate revenue through rate-based capital investment and billing for demand and volumetric sales, not through managing flexible loads. As a result, traditional integrated resource plans still undervalue demand-side resources, and few utilities have clear cost-recovery pathways for flexibility or performance-based services. The outcome: data centers are treated as fixed loads rather than dynamic grid assets.

Most large-load tariffs still reward consistency, not responsiveness.12 Time-of-use (TOU) volumetric and demand charges are aimed at cost recovery and peak reduction, not at paying for fast, verified multi-hour curtailment or locational flexibility. In the general absence of performance-based rates or non-operational thinking, data centers that provide flexible services receive the same compensation as those that provide minimal response.

Technology vendor competition

Competition among technology providers has created a patchwork of proprietary systems that don’t talk to each other. Major technology providers, from facility management systems to orchestration platforms, have developed solutions optimized for competitive advantage rather than ecosystem coordination. The result is vendor lock-in and a proliferation of custom integrations. Each new flexibility project becomes a one-off design exercise instead of an application of standard architecture, slowing rollout and making it harder to scale across vendors and customer types.

Investment misalignment

Data centers invest heavily in backup generation, UPS, BESS, and advanced cooling to protect their own operations, but rarely plan those assets in coordination with the grid. Utilities pour capital into new transmission and capacity to serve projected peaks and build up the rate base on which they earn returns, often without clear mechanisms to factor data center flexibility into planning or procurement. Vendors chase markets for facility optimization or backup systems without explicitly designing for grid integration. Each party is spending money to manage risk in isolation. Very little of that investment is currently optimized for shared system benefit.13

No historical playbook and early signs of progress

Both sectors are operating without established frameworks for large-scale coordination. Past demand response programs mostly dealt with traditional commercial and industrial customers, not large loads with critical reliability requirements. Data centers also have little track record of working with utilities to implement change beyond interconnection, system improvement projects, and billing relationships.

Early joint efforts are starting to show a way through. Shared pilot programs, joint planning forums, and co-designed contract models are proving that it is possible to align incentives and build trust. Projects that combine AI-driven orchestration, transparent performance data, and clear event rules are beginning to demonstrate measurable grid benefits without undermining uptime. Similarly, utility-led efforts that use advanced forecasting and demand modeling to speed interconnections while maintaining reliability show that flexible loads can be incorporated into planning in a structured way. But overall, the industry is still operating without a long history or established playbook for hyperscale-utility collaboration.

3.1.3 Trust barriers between utilities and hyperscalers

Utilities and hyperscale data center operators view each other with skepticism rooted in different operational cultures, risk tolerances, and business models. These trust barriers represent the most significant non-technical obstacle to systematic flexibility deployment.

SLA concerns and customer impacts

Hyperscalers are rightfully anxious when it comes to service level agreements (SLAs) with their customers. A single SLA miss can trigger customer churn, reputational damage, and long-tail revenue loss on the order of millions of dollars per MW per month. Most hyperscalers are built for “five nines” (~5 minutes of downtime annually) or “four nines” (~52 minutes of downtime annually).14 Major cloud providers have invested billions in infrastructure designed to prevent service interruptions, making utility requests for load curtailment appear to conflict with core business objectives.
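The downtime figures quoted for “five nines” and “four nines” follow directly from the availability percentages. A minimal sketch of the arithmetic, assuming an average year of 365.25 days (the function name is illustrative, not taken from any cited source):

```python
MINUTES_PER_YEAR = 365.25 * 24 * 60  # average year, leap days included

def annual_downtime_minutes(nines: int) -> float:
    """Allowed downtime per year for an availability with `nines` nines.

    For example, nines=5 means 99.999% availability.
    """
    unavailability = 10.0 ** (-nines)
    return MINUTES_PER_YEAR * unavailability

for n in (4, 5):
    print(f"{n} nines: about {annual_downtime_minutes(n):.1f} minutes per year")
```

The tenfold gap between the two targets is why even a short curtailment event that goes wrong can consume an entire year of downtime budget.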

Flexibility complicates this further. Switching power sources or reducing load can create operational risks. Hyperscalers need certainty on event timing, maximum duration, compensation, and performance standards so that flexibility never jeopardizes uptime or customer trust.

Utility and grid operators’ reliability skepticism

For utilities and grid operators, skepticism runs the other way. Many have seen demand response underperform during critical events and are cautious about depending on new, untested types of flexible load. Historical experience in some regions shows DR portfolios delivering only a fraction of committed reductions during major stress. For instance, during Winter Storm Elliott in December 2022, PJM’s demand response resources received nearly $90 million in compensation for compliance, yet actual load reductions reached only 26-32% of expected performance levels.15 Data centers, with their mission-critical nature, are seen as unlikely to curtail when it truly matters, especially in programs without strict rules.

Information gaps and language differences

Data center operators treat operational details as competitive secrets; utilities lack visibility into real flexibility potential. Misaligned terminology, divergent risk frameworks, and mismatched planning timelines widen the divide. Programs designed without mutual understanding often impose requirements that don’t fit operational realities.

Cultural friction

Cultural differences might be hard to fix. Technology giants, such as Amazon, Google, and Microsoft, move fast, favoring iterative design, flexible contracts, and performance-based incentives, while utilities operate within regulatory frameworks that prioritize approval processes, standardized terms, and predictable cost recovery. The friction isn’t about goals, since both sides prioritize uptime and reliability; it is about how risk, financing, and accountability are distributed.

3.2 The six-tier ecosystem coordination model

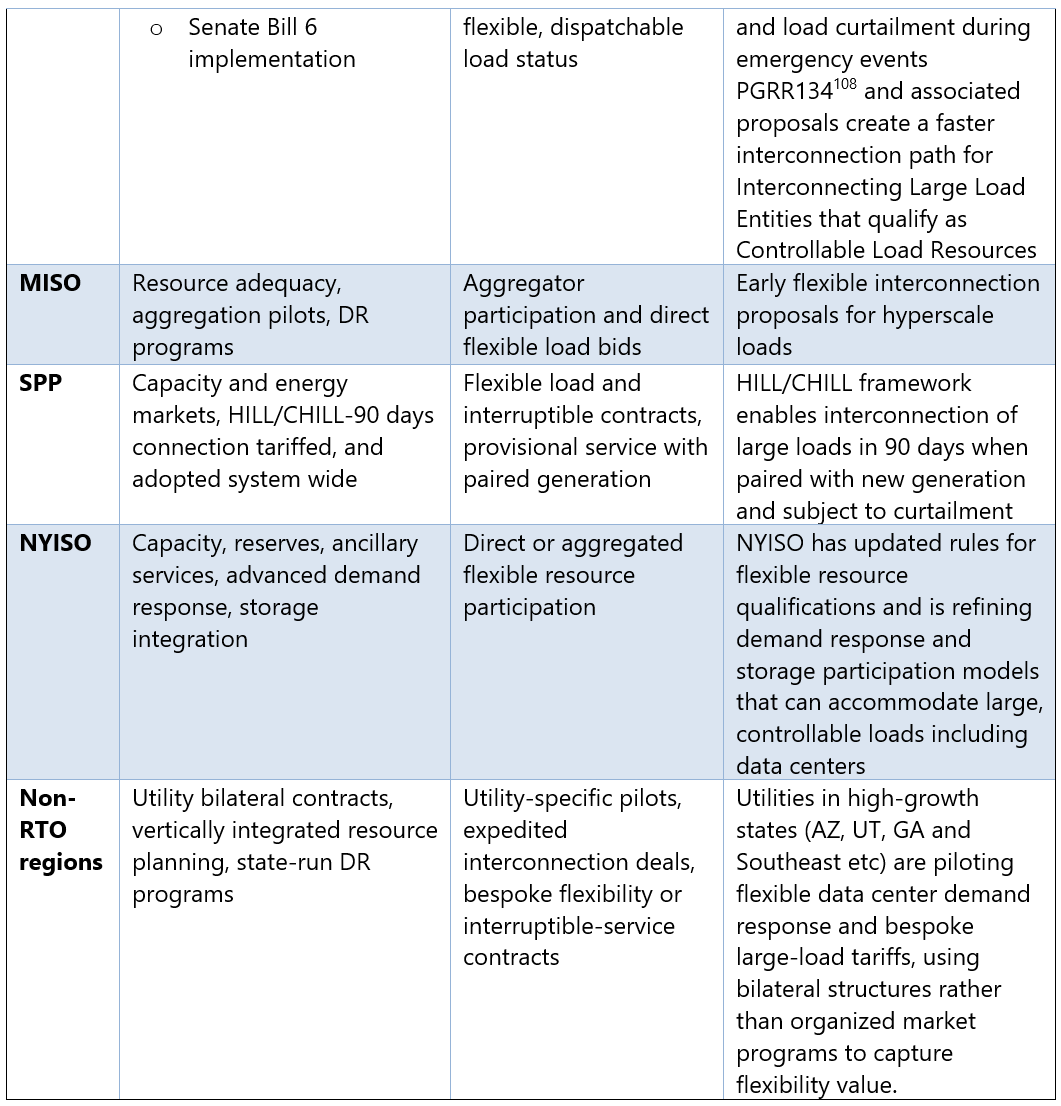

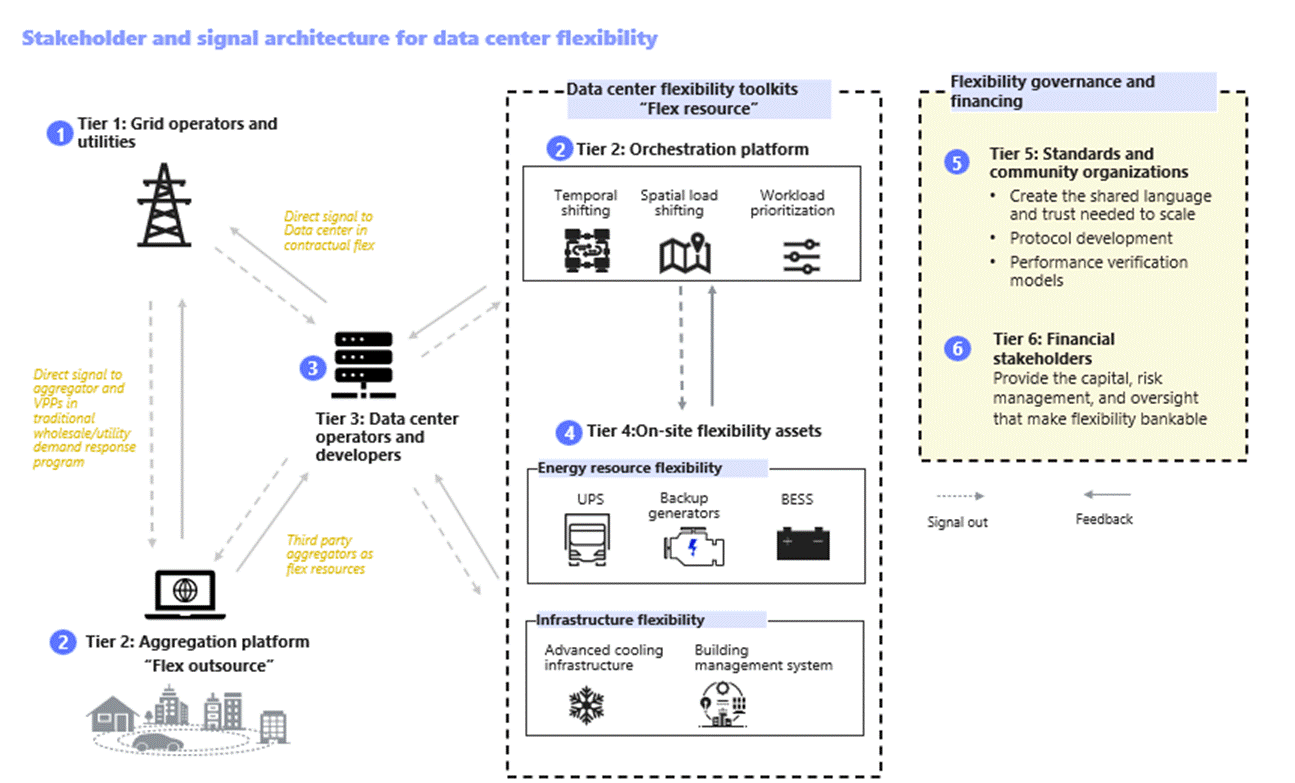

Solving the data center grid challenge isn’t something any single player can do alone. Chapter 1 introduced the six-tier stakeholder ecosystem that brings structure to this new landscape. Tier 1 includes grid operators and utilities, who shape system signals and reliability standards. Tier 2 covers aggregators and orchestration platforms that translate market signals into actionable dispatches. Tier 3 is data center operators and developers, the primary implementers of flexibility measures. Tier 4 includes on-site flexibility assets, such as BESS, generators, and advanced cooling, delivering the physical response. Tier 5 represents standards bodies and community organizations, which set interoperability protocols and best practices. Finally, Tier 6 includes financial stakeholders, investors, insurers, and auditors, whose requirements drive assurance and scale for this evolving ecosystem.

Because data center flexibility is still in its pilot phase, with no historical playbook to lean on, this section takes the next step: turning the six-tier model into a proposed coordination framework that sets out who does what, how they interact, and why (Figure 20).

3.2.1 Tier 1: Grid operators and utilities

Grid operators and utilities sit at the center of the flexibility ecosystem. They see real-time system conditions, and they ultimately carry the responsibility for keeping the lights on. Their role is expanding from “ensure reliability of supply” to include “actively coordinate load,” a change that is essential for scaling flexibility.

They’re already moving in that direction. CAISO’s Emergency Load Reduction Program has shown it can coordinate more than 1.4 GW of demand during stress events.16 Grid operators and utilities set the pace, but without clear signals, nothing downstream can move. They issue the system signals, events, forecasts, flex product definitions, and price curves.

How grid operators (tier 1) coordinate with the rest of the ecosystem:

To aggregators (tier 2): In traditional utility and wholesale response programs, grid operators send signals directly to participating aggregators or VPPs. Aggregators translate these into portfolio-level instructions and determine how each site contributes to the event. Data centers enrolled under an aggregator follow these advanced signals and dispatch instructions through the aggregator’s platform.

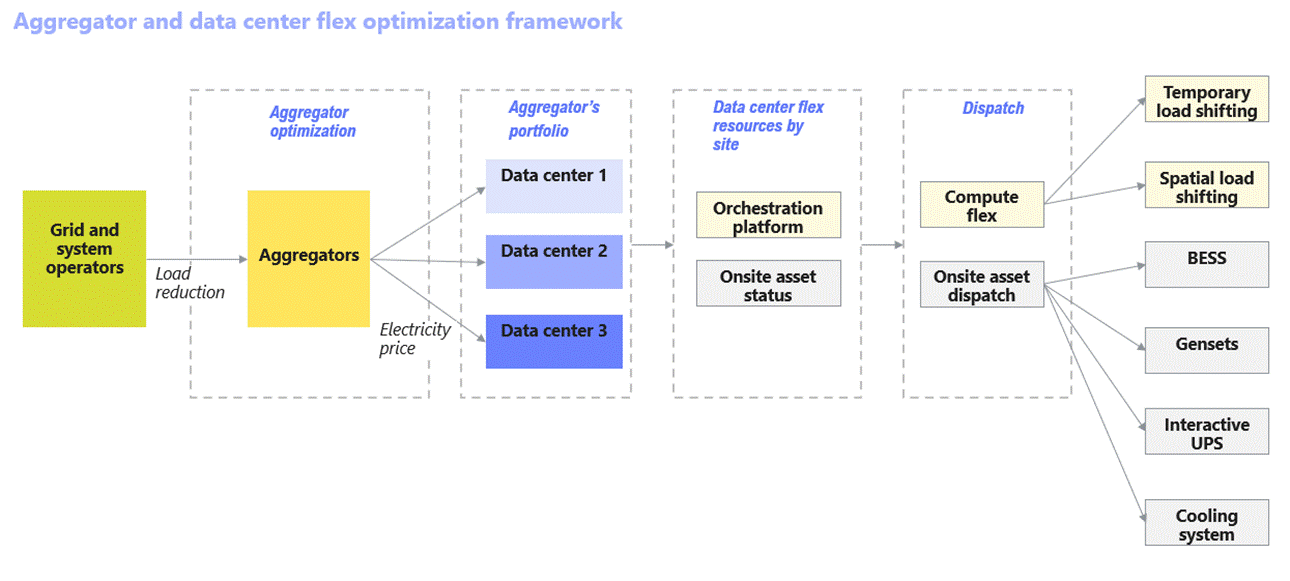

With data center operators and developers (tier 3): As integrated resource planning (IRP) and contractual flexibility evolve, grid operators begin treating data centers as grid assets. Grid operators share predictive forecasts, send direct dispatches, and define penalty and compensation terms so data centers can plan and commit capacity with confidence. Data centers can then dispatch on-site assets, shift load via compute flexibility, or use third-party aggregators for load reduction, in line with the ongoing large-load proposal in PJM.17

With on-site flexibility assets (tier 4): On-site assets remain owned and controlled by data centers. Dispatching decisions flow through orchestration platforms (tier 2) that adhere to participation rules set by the grid operator.

With standards organizations (tier 5): Grid operators provide real operational insight that shapes communication protocols, telemetry requirements, and verification models.

With financial stakeholders (tier 6): Utilities collect and validate event performance, feeding settlement, ESG reporting, and financial audits, turning flexibility into a bankable resource.

As the coordination mechanisms are defined, several capabilities at the grid operator and utility level must evolve for flexibility to scale:

Real-time and predictive signaling

Instead of calling for curtailment in the moment, system operators are beginning to send standardized signals or forecasts that include advance notice. The goal is to move from reactive emergency response (“we need you immediately”) to proactive system optimization (“we’re 75% likely to need you Thursday at 5pm”). Advanced grid signal generation and predictive dispatch enable data centers to prepare through compute flexibility and energy storage pre-charging.18
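To make the shift from reactive to predictive signaling concrete, the sketch below shows how a site might turn a probabilistic forecast into preparatory actions. It is illustrative only: the `PredictiveSignal` fields, the 50% preparation threshold, and the example values are assumptions, not part of any operator’s actual signal format.

```python
from dataclasses import dataclass

@dataclass
class PredictiveSignal:
    event_start: str       # ISO 8601 start of the forecast event window
    probability: float     # operator's estimated likelihood of dispatch (0 to 1)
    requested_mw: float    # load reduction the operator may call for
    duration_hours: float  # expected event length

def preparation_plan(signal, prep_threshold=0.5, battery_mwh=0.0):
    """Return preparatory actions for a forecast event (hypothetical policy)."""
    actions = []
    if signal.probability < prep_threshold:
        return actions  # unlikely event: keep operating normally
    energy_needed = signal.requested_mw * signal.duration_hours
    # Pre-charge storage up to the energy the event could require.
    precharge = min(battery_mwh, energy_needed)
    if precharge > 0:
        actions.append(f"pre-charge storage to cover {precharge:.1f} MWh")
    # Whatever storage cannot cover must come from deferrable compute.
    remainder = energy_needed - precharge
    if remainder > 0:
        actions.append(f"defer {remainder:.1f} MWh of flexible compute")
    return actions

# "75% likely to need you Thursday at 5pm": 20 MW for 2 hours (placeholder values).
signal = PredictiveSignal("2025-01-09T17:00:00", 0.75, 20.0, 2.0)
plan = preparation_plan(signal, battery_mwh=30.0)
print(plan)
```

The design point is that the probability arrives early enough for the site to act on it; the same signal delivered at event time would leave no room for pre-charging or rescheduling.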

In its OpFlex pilot report,19 PG&E shows how a utility can translate high-level system conditions into precise, site-level operating limits. PG&E brings together DERMS, ADMS, AMI, and location-specific forecasts, using machine learning to predict constraints over a 72-hour horizon. The system allocates headroom across enrolled sites and issues hourly schedules via IEEE 2030.5. Automatic “failsafes” revert customers to firm Planning Limits if unexpected situations arise, such as feeder overloads, loss of communications, or switching events, which gives operators confidence in the reliability of non-firm capacity. But the pilot report also makes one thing clear: DERMS is still far from an off-the-shelf solution for utilities. PG&E had to work closely with vendors to redesign, test, implement, and integrate operating functionality for even the minimum viable deployment of its four initial use cases: IEEE 2030.5 telemetry, flexible service connections, flexible generation connections, and the operationalization of its Distribution Investment Deferral Framework.
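The headroom allocation and failsafe behavior described above can be illustrated with a small sketch. This is not PG&E’s algorithm: the proportional split, the function names, and the example values are assumptions chosen only to show the shape of the logic.

```python
def allocate_headroom(forecast_headroom_kw, requests_kw):
    """Split forecast feeder headroom across sites in proportion to their requests.

    Proportional sharing is an assumed policy for illustration.
    """
    total_request = sum(requests_kw.values())
    if total_request == 0 or total_request <= forecast_headroom_kw:
        return dict(requests_kw)  # enough headroom: grant every request in full
    scale = max(forecast_headroom_kw, 0.0) / total_request
    return {site: req * scale for site, req in requests_kw.items()}

def operating_limits(planning_limits_kw, flex_kw, comms_ok=True, feeder_overload=False):
    """Limit per site for one hour: firm limit plus flexible allocation."""
    if not comms_ok or feeder_overload:
        # Failsafe: revert every site to its firm Planning Limit.
        return dict(planning_limits_kw)
    return {s: planning_limits_kw[s] + flex_kw.get(s, 0.0) for s in planning_limits_kw}

planning = {"site_a": 1000.0, "site_b": 2000.0}
flex = allocate_headroom(200.0, {"site_a": 300.0, "site_b": 100.0})
print(operating_limits(planning, flex))                  # normal operation
print(operating_limits(planning, flex, comms_ok=False))  # failsafe tripped
```

In a real deployment this calculation would be repeated for each hour of the forecast horizon and the resulting schedule communicated to each site; the sketch covers a single hour.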

Settlement and compensation mechanisms

Today, there is no clear or consistent compensation mechanism for data center flexibility. For projects still under development, flexibility brings immediate benefits. Accelerating “speed to power” and avoiding years of delay can easily add up to billions of dollars in opportunity cost. But for the GWs of operating fleets, the incentives are far less obvious. Grid operators need to put forward an appealing business case. To make flexibility real, markets need transparent, performance-based compensation designed specifically for large loads and data centers. Clear price signals and predictable revenue streams create the visibility operators need to justify the operational trade-offs that flexibility requires.

Performance-based payouts require sophisticated monitoring and performance verification systems that also protect sensitive operational data. Automated settlement systems can reduce transaction costs while supporting audit and regulatory compliance.
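As a rough illustration of what performance-based settlement could look like, the sketch below pays only for verified reduction against a baseline, capped at the committed amount. The baseline method, the cap, and the flat rate are assumptions; actual market rules differ by program.

```python
def settle_event(baseline_mw, metered_mw, committed_mw, rate_per_mwh, interval_hours=1.0):
    """Pay for delivered reduction per metering interval, capped at the commitment."""
    delivered_mwh = 0.0
    committed_mwh = committed_mw * interval_hours * len(baseline_mw)
    for base, actual in zip(baseline_mw, metered_mw):
        reduction = max(base - actual, 0.0)  # no credit when load rises above baseline
        delivered_mwh += min(reduction, committed_mw) * interval_hours
    performance = delivered_mwh / committed_mwh if committed_mwh else 0.0
    return {
        "delivered_mwh": delivered_mwh,
        "performance": performance,
        "payment": delivered_mwh * rate_per_mwh,
    }

# Four one-hour intervals, 10 MW committed, hypothetical rate of 500 per MWh.
result = settle_event(
    baseline_mw=[100.0, 100.0, 100.0, 100.0],
    metered_mw=[90.0, 92.0, 100.0, 95.0],
    committed_mw=10.0,
    rate_per_mwh=500.0,
)
print(result)
```

Only aggregate interval data enters the calculation, which is one way settlement can be verified without exposing workload-level operational detail.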

System planning integration

This is the biggest cultural shift for utilities: flexibility must be built into integrated resource planning (IRP) and load studies, not treated as an afterthought. Utilities have included demand response, VPPs, and other flexible resources such as BESS and generators, but integrating data center flexibility into IRPs is not as simple as adding another line item. Data center flexibility behaves nothing like the static assumptions IRPs were designed for. These resources introduce dynamic, probabilistic patterns, with load that can shift, pause, or respond in seconds, requiring new modeling tools, new procurement structures, and continuous performance verification.

Core integration challenges

Utilities need new modeling capabilities that can represent flexible load, portfolio aggregation, and real-time system variability. Legacy IRP methods still treat load as passive and static, which obscures the true system value of demand-side coordination.

Best practice now calls for broader stakeholder engagement, transparent data sharing, and a more explicit linkage between resource adequacy, reliability, and decarbonization objectives. Flexibility sits at the intersection of all three, but most IRP frameworks are only beginning to account for it.

Integrating flexibility also requires an iterative planning and load study process. Instead of locking in long-term infrastructure decisions based on fixed forecasts, utilities must be able to revisit and recalibrate resource needs as new technologies emerge, demand spikes materialize, or policies shift.

Progress has been slow due to legacy planning cultures, regulatory oversight, and persistent information gaps. Until modeling tools, procurement pathways, and performance verification systems are integrated, flexibility will remain underutilized.

3.2.2 Tier 2: Aggregators and orchestration platforms

Aggregators and orchestration platforms together form the translation layer that turns grid signals, through optimization and dispatch, into safe, reliable action across an entire fleet. Their interaction with the rest of the ecosystem includes:

Receive system signals from grid operators (tier 1) and data centers and turn them into action.

Decide and return event decisions and workload-tiering guidance to data center operators (tier 3), such as:

Signal interpretation: event type, urgency, expected duration, and required response.

Constraint evaluation: SLA limits, site asset availability, current workload profiles, and risk factors.

Prioritization and classification: scheduling workloads by flex tier and prioritizing the asset dispatch schedule.

Issue asset-level dispatch (tier 3) or compute flexibility schedule and manage performance. If sites or grid conditions change, it reallocates assets, reschedules workloads, or shifts actions to different sites or schedules.

Share interoperability needs and participate in standards development with standards organizations and the community (tier 5)

Deliver aggregated performance data for settlement, insurance, and audit to financial stakeholders (tier 6)
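The interpretation, constraint evaluation, and prioritization steps listed above can be sketched as a simple planning routine. Everything here is hypothetical: the `Workload` and `Asset` types, the convention that flex tier 0 is SLA-protected, and the choice to use on-site assets before deferring compute are assumptions for illustration, not a description of any vendor’s platform.

```python
from dataclasses import dataclass

@dataclass
class Workload:
    name: str
    mw: float
    flex_tier: int  # 0 = SLA-critical, never deferred; higher = more deferrable

@dataclass
class Asset:
    name: str
    available_mw: float

def plan_response(required_mw, workloads, assets):
    """Cover a requested reduction with on-site assets first, then deferrable compute."""
    plan, remaining = [], required_mw
    # 1. Dispatch on-site assets, largest available capacity first.
    for asset in sorted(assets, key=lambda a: a.available_mw, reverse=True):
        if remaining <= 0:
            break
        used = min(asset.available_mw, remaining)
        if used > 0:
            plan.append(("dispatch", asset.name, used))
            remaining -= used
    # 2. Defer whole workloads, most flexible tier first; tier 0 stays untouched.
    for wl in sorted(workloads, key=lambda w: w.flex_tier, reverse=True):
        if remaining <= 0:
            break
        if wl.flex_tier == 0:
            continue
        plan.append(("defer", wl.name, wl.mw))
        remaining -= wl.mw
    return plan, max(remaining, 0.0)  # second value is any uncovered shortfall

assets = [Asset("bess", 5.0), Asset("genset", 4.0)]
workloads = [Workload("serving", 10.0, 0), Workload("batch", 3.0, 1), Workload("training", 6.0, 2)]
plan, shortfall = plan_response(12.0, workloads, assets)
print(plan, shortfall)
```

A non-zero shortfall is the signal to reallocate: the platform would either draw on another site in the portfolio or report reduced availability back to the grid operator.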

Although they operate side by side, aggregators and orchestration platforms play slightly different roles:

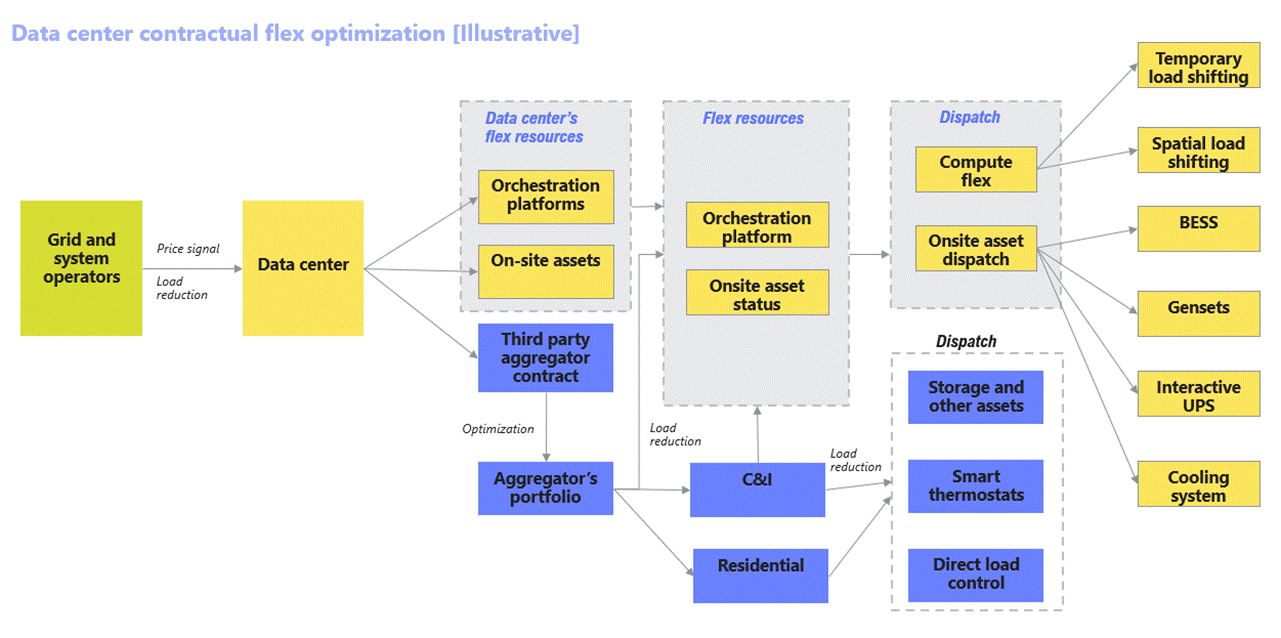

In traditional demand response programs, aggregators are the market-facing layer. They enroll data centers or other flexible loads, bundle them into a single resource, and handle customer contracts to bid into grid programs. Some key players, such as CPower, Voltus, or Edgecom Energy, manage participation and make sure portfolios show up when the grid calls. As the data center flexibility ecosystem evolves, aggregators may also take on a new role as third-party resources, performing load reductions under contractual flexibility (Figure 21).

Orchestration platforms are the technical layer behind the aggregators. These systems take grid signals, check what’s available at each site, and automatically dispatch compute flex or on-site assets without jeopardizing uptime. Notable players include FlexGen, Emerald AI, and Bamboo Energy.

Aggregators coordinate “who” participates and “what” is delivered; orchestration platforms provide the “how” by classifying and scheduling the response, dispatch, and status signal.

a) Aggregators

When data centers enroll in an aggregator’s portfolio, the aggregator is responsible for the committed “flex load.” To do this, aggregators determine optimal price signals or incentives across participating data centers to deliver the objectives (Figure 22). Their interactions within the data center flexibility ecosystem are as follows:

Below are highlighted functions that matter most for scaling data center flexibility:

Dispatch orchestration and automated control

Aggregators sit in the middle of everything. They take grid signals and turn them into actions that a data center can safely execute. That means translating a system-wide request into site-specific decisions that respect SLAs, asset limits, and real-time operating conditions.

Most of this happens through automated control. Advanced orchestration platforms using AI/ML evaluate constraints, prioritize workloads, and choose which assets respond, all in seconds. The emerging norm is “safe by default,” where the aggregator absorbs the complexity of market rules so the data center never compromises uptime or customer trust while participating.

Forecasting, scheduling, and risk management

A major part of the value aggregators bring is forward visibility. They forecast load, grid stress, and the likelihood of dispatch, then use that intelligence to shape schedules that balance flexibility opportunities with SLA protection.

They rely on probabilistic modeling, scenario analysis, and reserve planning to make those decisions. This allows data centers to participate with confidence while aggregators balance site-level risk and portfolio-level value.
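One way to picture the probabilistic modeling mentioned above is to ask how much capacity a portfolio can commit at a given confidence level when each site may or may not respond. The sketch below enumerates every respond/fail combination for a small portfolio; the independence assumption, the site capacities, and the response probabilities are all illustrative.

```python
from itertools import product

def delivery_distribution(sites):
    """Enumerate all respond/fail outcomes for a small portfolio.

    `sites` is a list of (capacity_mw, probability_of_responding) pairs,
    and sites are assumed to respond independently of one another.
    """
    outcomes = []
    for responds in product([True, False], repeat=len(sites)):
        prob, delivered = 1.0, 0.0
        for (mw, p), ok in zip(sites, responds):
            prob *= p if ok else (1.0 - p)
            delivered += mw if ok else 0.0
        outcomes.append((delivered, prob))
    return outcomes

def firm_commitment(sites, confidence=0.95):
    """Largest MW level the portfolio meets or exceeds with at least `confidence`."""
    outcomes = delivery_distribution(sites)
    for level in sorted({d for d, _ in outcomes}, reverse=True):
        if sum(p for d, p in outcomes if d >= level) >= confidence:
            return level
    return 0.0

portfolio = [(10.0, 0.9), (10.0, 0.9), (5.0, 0.8)]
print(firm_commitment(portfolio, confidence=0.95))
print(firm_commitment(portfolio, confidence=0.80))
```

The gap between nameplate capacity (25 MW here) and what can be committed with confidence is the reserve margin the aggregator carries on behalf of its sites. Exhaustive enumeration only works for a handful of sites; larger portfolios would need sampling or analytic approximations.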

Technical integration

Every data center looks different. They have different BMS, EMS, UPS, BESS, cooling systems, orchestration tools, and security layers. Aggregators and orchestrators are the ones who bridge all of that.

They integrate across sites, normalize data streams, and provide a single operational view for dispatch. Many also co-develop or adopt standards like OpenADR and IEEE 2030.5 to make onboarding simpler and reduce integration friction. This interoperability allows flexibility programs to scale instead of becoming one-off engineering exercises.

Cybersecurity, data protection, and trust building

Trust is a core requirement for flexibility. Aggregators must protect operator data and customer information. That means strong cybersecurity practices, encrypted data flows, strict access controls, and compliance with CPRA, GDPR, and NERC CIP standards.

This is essential for hyperscalers, financial partners, and regulators. Without clear protections and transparent audits, the flexibility ecosystem stalls. Strong cybersecurity is not only risk management. It is a prerequisite for participation.

Verification and reporting

Verified performance is the foundation for settlement, insurance, ESG reporting, and investor confidence. Aggregators gather dispatch status, validate delivery, and produce the reports that utilities, regulators, and financial stakeholders rely on.

Strong verification systems make flexibility predictable, auditable, and bankable, and they reduce the burden on operators by providing clean, trusted performance data.

b) Orchestration platforms

Major aggregator orchestration platforms

Orchestration platforms are the technical engines inside both data centers and aggregator systems. Major advanced AI/ML platforms adopted by aggregators include AutoGrid Flex, Enel X DER Optimization, Schneider Electric EcoStruxure, Siemens DEOP, GE GridOS, EnergyHub Mercury, and Leap. These systems allow aggregators to control multiple sites and asset types at once, from data centers, C&I facilities, and residential sites to DERs, BESS, and hybrid portfolios.

Data center-centric orchestration platforms

Within data centers, orchestration platforms ensure every action stays within operator-controlled risk limits, regulatory requirements, and SLAs. They classify and prioritize workloads into flex tiers and coordinate the full toolkit of site resources, acting as the Flexibility Management System (FMS). Vendors such as FlexGen, Emerald AI, and Bamboo Energy specialize in this dynamic, real-time control layer across diverse hardware environments. These systems are typically deployed directly inside data centers or across multi-site hyperscaler fleets, managing internal workloads, on-site assets, and flexibility operations.

3.2.3 Tier 3: Data center operators and developers

Data center operators are where flexibility either becomes real or doesn’t. They control the physical assets, draw the power, and own the customer relationship. Their role is shifting from “keep the site up” to “keep the site up and support the system.” How data center stakeholders (tier 3) coordinate with the rest of the ecosystem:

Send availability forecasts, constraints, and readiness to grid operators (tier 1)

Share priorities, operational envelopes, and asset commitments with the aggregator and orchestration platform (tier 2)

Trigger dispatch of UPS, BESS, generators, and cooling systems (tier 4) and pull real-time telemetry to ensure responses stay within SLA and safety envelopes.

Align operations and testing with emerging protocols, making their flexibility verifiable and interoperable (tier 5)

Provide certified event data, performance reports, and risk disclosures needed for settlement, ESG reporting, underwriting, and long-term financing (tier 6)

Data center operators (tier 3) are where flexibility becomes real: signals become operations, and operations become measured performance.

Design integration

New builds from major hyperscalers are no longer just “more capacity, faster.” They’re being designed with flexible assets from the start. For example, Google’s data center in Changhua County, Taiwan, incorporates thermal energy storage, enabling infrastructure flexibility without retrofit costs.20 Similarly, Microsoft’s global infrastructure development strategy incorporates standardized flexibility capabilities that provide spatial optimization across regions while maintaining service availability and performance standards.21 This design standardization reduces deployment costs while enabling portfolio-level coordination.

Operational coordination

Operators assess operational viability in response to grid signals and forecasts. They provide real-time availability, constraints, and readiness forecasts to utilities and aggregators, building a shared view of when and how they can respond.

Historically, IT teams and facilities teams have run largely in parallel. Flexibility forces them to work as one. As data centers optimize their flexibility strategies, the operating team needs to rebalance AI training jobs, pre-charge storage, adjust cooling setpoints, and document service level performance. Cultural change management represents a critical challenge for organizations built around operational conservatism and risk avoidance. Implementing systematic flexibility requires developing comfort with dynamic operations while maintaining core uptime commitments.

Partnership model

Relationships with utilities are starting to look less like “supplier–customer” and more like joint planning, as they should. Standardized partnership agreements can cut months out of negotiation cycles, lay out roles during events, and reduce friction across multiple territories. This is especially important for hyperscalers that operate across many utility service areas and need consistency.

3.2.4 Tier 4: On-site flexibility assets

On-site assets, including energy resources (UPS, BESS, and generators) and advanced cooling resources, interact up and down the stack:

Receive dispatch commands from data center operators (tier 3) and aggregators/orchestration platforms (tier 2)

Return real-time operating status and telemetry to operators (tier 3) and platforms (tier 2)

Align with test standards and certification requirements (tier 5)

Provide verified performance data used for insurance and risk assessment (tier 6)

Alongside compute flexibility, on-site assets (tier 4) are the muscle: the point where curtailment, shifting, or support physically happens.

Most data centers already operate with backup generation, UPS batteries, BESS, and advanced cooling, but these systems have often worked in silos. The next step is linking them into coordinated energy systems that can respond dynamically to grid needs without affecting uptime.

Hybrid configurations now blend batteries for rapid response, generators for sustained capacity, and thermal systems for load shifting. When integrated through modern control platforms, these assets can deliver frequency regulation, spinning reserves, or targeted demand reduction in minutes rather than hours.
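The division of labor among batteries, generators, and thermal systems comes down to two properties: how fast an asset responds and how long it can sustain output. The sketch below screens a hybrid fleet against the requirements of a grid service. The asset parameters and the service thresholds are placeholder values for illustration, not specifications from any program or manufacturer.

```python
from dataclasses import dataclass

@dataclass
class FlexAsset:
    name: str
    mw: float
    response_seconds: float  # time to reach full output after a command
    max_hours: float         # how long full output can be sustained

def eligible_mw(assets, max_response_seconds, min_hours):
    """Total capacity that is both fast enough and durable enough for a service."""
    return sum(
        a.mw
        for a in assets
        if a.response_seconds <= max_response_seconds and a.max_hours >= min_hours
    )

fleet = [
    FlexAsset("bess", 10.0, response_seconds=1.0, max_hours=2.0),
    FlexAsset("generator", 20.0, response_seconds=600.0, max_hours=24.0),
    FlexAsset("thermal_storage", 5.0, response_seconds=300.0, max_hours=4.0),
]

# A fast, short service (regulation-like) versus a slower, sustained reduction.
print(eligible_mw(fleet, max_response_seconds=10.0, min_hours=0.25))
print(eligible_mw(fleet, max_response_seconds=900.0, min_hours=4.0))
```

With these placeholder numbers only the battery qualifies for the fast service, while the generator and thermal storage together cover the sustained one, which is the complementarity that hybrid configurations rely on.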

The real enabler is orchestration platforms. Smart power-management systems aggregate and verify performance, allowing on-site assets to participate in grid programs as reliable, dispatchable resources. When networked across multiple facilities, they effectively operate as virtual power plants (VPPs) built from equipment already in place.

3.2.5 Tier 5: Standards and community organizations

This is the scaling layer. Standards organizations, industry consortia, and technical working groups create a common language and trust. Tier 5 turns fragmentation into interoperability; it makes the ecosystem scalable. How standards and community organizations (tier 5) coordinate with the rest of the ecosystem:

Gather practical experience, operational data, and technical needs from grid operators, data centers, and orchestration platforms (tiers 1 to 3)

Provide communication protocols, verification methods, and certification frameworks to all stakeholders (tiers 1 to 4)

Define, for financial stakeholders (tier 6), the ESG, audit, and reporting standards that capital markets require

Protocol development

Tier 5 groups develop open standards and open-source frameworks that make flexibility deployable across different hardware, software, and operating models. Protocol development keeps the field competitive while ensuring systems can work together. And because these standards are shaped by input from across the ecosystem, not just a single vendor, they reflect what stakeholders truly need to scale.

Certification systems

The PG&E OpFlex pilot highlights scaling challenges in tier 5. There were no available standards to certify equipment involved in flexibility. The current version of the Common Smart Inverter Profile (CSIP) for IEEE 2030.5 does not fully cover the required use cases, nor do early versions of UL 3141, those scoped for DER/inverter use cases. Even when enrolled devices comply with these standards, PG&E still requires utility-specific integration testing, commissioning, and validation to make sure dynamic limits, “failsafes”, and performance behave as committed. Vendors and aggregators have noted that these site-specific requirements create real bottlenecks to achieving “whole system” certification that can apply across sites and regions. It’s a clear signal that scaling flexibility will require modern protocols and test procedures designed specifically for large flexible loads and data centers.

Independent certification gives utilities confidence that data centers can perform during grid events, without requiring full internal visibility. Third parties provide trusted verification frameworks to assess flexibility capability and validate performance. Consistent, scalable certification, with standardized metrics and test procedures, is essential to deploying flexibility across different facility types, technologies, and use cases. With these standards in place, utilities can rely on verified performance, while data centers can credibly demonstrate their capabilities without undergoing duplicative, site-specific testing.

Shared learning and workforce

Organizations like the Open Compute Project give operators, utilities, vendors, and regulators a place to compare notes instead of reinventing the wheel in private.22 Academic and research partnerships help build the next generation of tools (machine learning for dispatch optimization, predictive analytics for event planning, long-duration storage integration) and the talent base the ecosystem will need. This matters because, without common standards and shared proof, individual programs would never become a market.

3.2.6 Tier 6: Financial stakeholders

Financial stakeholders (tier 6) coordinate with all stakeholders (tiers 1 to 5) to standardize financial disclosures, measure exposures, monitor the performance and risk of on-site assets (tier 4), and support financing of data centers’ flexibility investments (tier 3). They also cover and reinsure the technology and performance risks that accompany new technology as it moves from early pilots into bankable, scalable solutions. Together, these mechanisms ensure that flexibility systems are technically sound, financially trusted, and scalable.

Due diligence integration: Financial requirements, covering technical, ESG, and operational disclosures, are built into projects from the start. This means assessing new technologies, business models, and counterparties so risk premiums, guarantees, and contingent capital are sized with clarity and confidence. This ensures flexibility initiatives are designed to meet investor expectations and bankability standards before they reach the financing stage.

Insurance and risk governance: Insurers coordinate closely with asset operators (tier 4) and developers (tier 3) to maintain continuous coverage and manage risks throughout the project lifecycle, such as performance guarantees for flexibility assets, cyber-physical exposure from orchestration platforms, and revenue stability under performance-based tariffs. Regular reviews keep insurance terms aligned with real performance data and evolving grid participation models.

Auditing and compliance oversight: Auditors work with standards bodies (tier 5) to verify that certifications, emission reductions, and reports meet lender and investor needs. Their independent validation translates operational complexity into standardized, comparable metrics that fit within familiar project-finance and corporate-credit frameworks.

Financial covenant monitoring: Financing terms tie pricing and conditions to verified performance, ESG milestones, or resilience metrics, making flexibility a quantifiable part of financial risk. As technologies and business models mature, these covenants change from conservative pilot structures to more adaptive frameworks that realize reliability, scale, and cost-effective innovation.

3.3 Simplified system signal architecture

This section introduces an illustrative signal architecture that shows how flexibility operates in practice: how signals move, how decisions are made, and how performance is verified across the ecosystem (Figure 23).

Step 1: Grid operators and utilities broadcast system needs and forecasts, and provide flex event and pricing signals, performance incentives, and payments. These signals flow either directly to data centers through contractual flexibility or to participating aggregators in traditional demand-response programs.

Step 2: Data centers send signals to aggregators/orchestration platforms, which classify and optimize workloads for compute flexibility and assess the real-time status of on-site assets (such as UPS, BESS, gensets, and advanced cooling) to refine their decisions.

Step 3: Data center operators return visibility to the grid: available flexible load, operational constraints, readiness windows, and expected response capabilities. This ensures utilities know what flexibility is available before events occur.

Step 4: Aggregator platforms and VPPs receive grid signals and translate them into concrete, site-level instructions. They allocate curtailment across portfolios.
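One simple way to picture the allocation in Step 4 is a pro-rata split, where each site is asked for a share of the portfolio target in proportion to the flexibility it has reported as available. This is only a sketch under that assumption; the `allocate_curtailment` helper, site names, and megawatt values are hypothetical, and real platforms would likely also weigh price, service-level commitments, and asset constraints.

```python
def allocate_curtailment(target_mw, available_mw):
    """Split a portfolio-level target across sites in proportion to available flexibility."""
    total = sum(available_mw.values())
    if total <= 0:
        raise ValueError("no flexible capacity available")
    # Never ask the portfolio for more than it has reported as available.
    target = min(target_mw, total)
    return {site: target * mw / total for site, mw in available_mw.items()}


# Hypothetical availability reported by three sites (MW).
AVAILABLE = {"site_a": 6.0, "site_b": 3.0, "site_c": 1.0}

# A 5 MW grid request becomes site-level instructions.
instructions = allocate_curtailment(5.0, AVAILABLE)
```

Under these assumed inputs, the site with the most reported flexibility carries the largest share, and no site is asked for more than it offered.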

Step 5: Data center operators accept these translated instructions and coordinate internal responses, choosing whether to use their own “toolkit” (compute or on-site assets) or third-party aggregator flex resources. They update commitments as conditions evolve.

Step 6: On-site flexibility assets, including UPS, BESS, generators, cooling, and the building management system (BMS), execute the physical response and report status and performance data back up the chain.

All steps: Tiers 5 and 6 sit across the entire ecosystem, serving as cross-cutting layers. They ensure that all signals and transactions, from curtailment dispatch to investment decisions or insurance payouts, follow protocols, standards, and verified outcomes.

The next chapter

We now have a clearer picture of the players, the signals, and the coordination it takes to make flexibility real. But understanding how the system works is only half the story.

Part 4 dives deeper into the economics: what flexibility is worth, who captures the value, and why the financial upside is far greater than the few hours of curtailment required. It’s where the business case comes together.

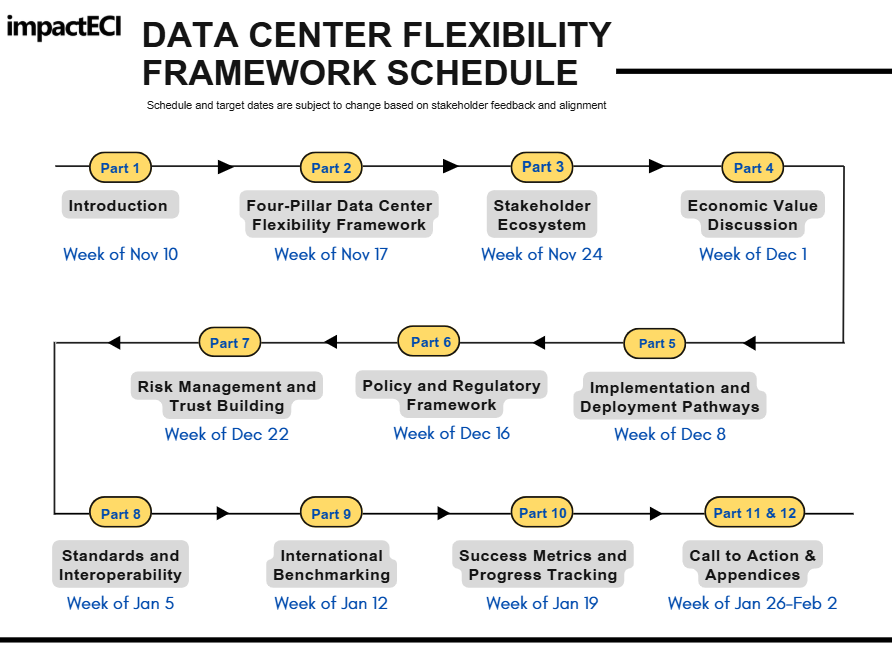

Schedule of Future Chapter Releases

https://www.ferc.gov/media/order-no-841

https://www.ferc.gov/ferc-order-no-2222-explainer-facilitating-participation-electricity-markets-distributed-energy

https://natlawreview.com/article/ferc-seeks-comments-november-14-2025-proposed-doe-reforms-accelerate#

https://www.energy.gov/sites/default/files/2025-10/403%20Large%20Loads%20Letter.pdf

https://www.mayerbrown.com/en/insights/publications/2025/11/ferc-large-load-interconnection-preliminary-rulemaking-key-takeaways-for-data-center-developers-other-large-load-projects-and-investors

https://www.energy.gov/articles/energy-department-launches-speed-power-initiative-accelerating-large-scale-grid

https://www.utilitydive.com/news/southwest-power-pool-spp-large-load-interconnection-policy/760357/

https://www.whitecase.com/insight-alert/grid-operators-propose-innovative-measures-manage-electricity-demand-data-centers

https://www.caiso.com/documents/2025-summer-loads-and-resources-assessment.pdf

https://www.nyiso.com/documents/20142/50614388/20250401%20ICAPWG%20CMSR%20v5%20(1).pdf/7c514b31-a2bd-e286-9b7f-f65c3c82e851

https://blog.yesenergy.com/yeblog/ercot-and-iso-ne-introducing-energy-market-co-optimization-in-2025

https://pubs.naruc.org/pub/2A466862-1866-DAAC-99FB-E054E1C9AB13?_gl=1*4xpa6f*_ga*MTA4MzM4MTQwLjE3NjE5MjI4ODk.*_ga_QLH1N3Q1NF*czE3NjE5MjI4ODgkbzEkZzAkdDE3NjE5MjI4ODgkajYwJGwwJGgw

https://www.datacenterdynamics.com/en/opinions/data-centers-role-in-providing-resilience-and-flexibility-to-power-grids/

https://digitalbankexpert.com/2025/10/designing-for-nines-that-match-real-banking-risk/

https://www.esig.energy/wp-content/uploads/2025/02/ESIG-Demand-Response-Wholesale-Markets-report-2025.pdf

https://www.caiso.com/documents/department-of-market-monitoring-update-mar-2025.pdf

https://www.pjm.com/-/media/DotCom/committees-groups/cifp-lla/2025/20251001/20251001-item-05d---joint-stakeholder-options---amazon-calpine-constellation-google-microsoft-talen.pdf

https://www.frontiersin.org/journals/energy-research/articles/10.3389/fenrg.2022.863292/full

https://www.cpuc.ca.gov/-/media/cpuc-website/divisions/energy-division/documents/rule21/smart-inverter-working-group/pge_opflex_report_2025.pdf

https://www.datacenterknowledge.com/hyperscalers/google-embraces-thermal-storage-in-taiwan

https://learn.microsoft.com/en-us/azure/well-architected/design-guides/regions-availability-zones

https://www.intel.com/content/www/us/en/customer-spotlight/stories/open-compute-project-customer-story.html