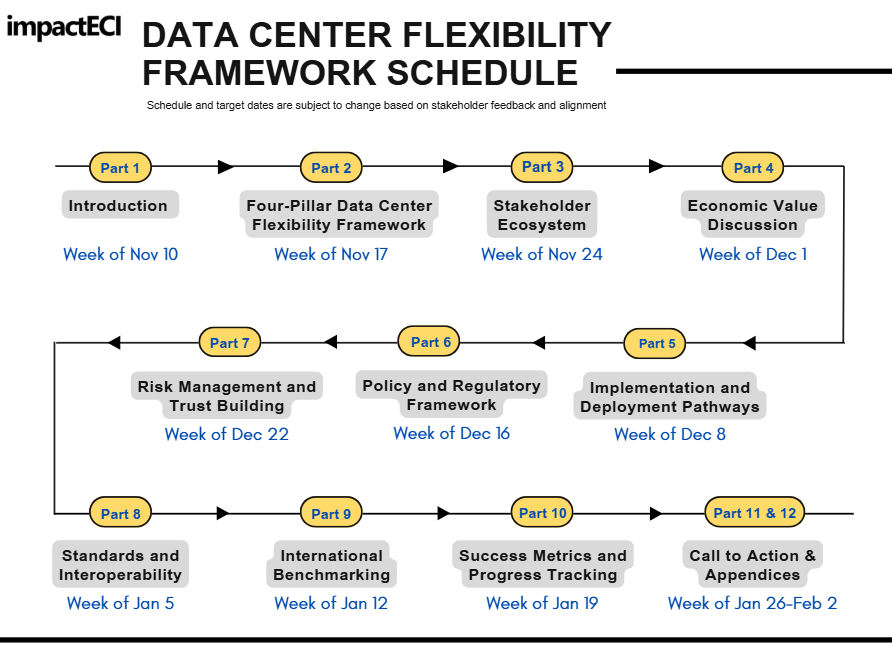

Data Center Flexibility: Economic Value Analysis

Chapter 4

The economics of data center flexibility goes beyond traditional demand response. When designed and executed well, flexibility can pull revenue forward, reduce utility costs, create new revenue streams for operators, and strengthen both grid efficiency and decarbonization. Utility decisions to seek flexibility often depend on how they earn revenue and plan capital investments. But regulators are increasingly requiring large loads to come forward with flexible demand so utilities can optimize capital allocation, prevent stranded assets, and keep costs manageable for ratepayers.

Flexibility also delivers meaningful economic benefits to local communities. Conditional, flexible interconnections pull construction activity, infrastructure investment, tax revenue, and job creation forward by years. They also accelerate the digital backbone that supports AI, manufacturing, logistics, and defense. These broader gains rarely show up in traditional planning, which tends to focus on short-term energy transactions rather than the full system value of flexibility. And this doesn’t include the additional economic and local health benefits from reducing on-site generator run-time, which often carries harmful emissions.

A complete framework needs to reflect this wider impact. It should capture direct value from avoided infrastructure and carbon emissions, create benefit-sharing structures that align utilities and customers, and recognize the societal value created through faster, cleaner economic development.

4.1 Value creation for utilities, data centers, and communities

4.1.1 Value creation for utilities

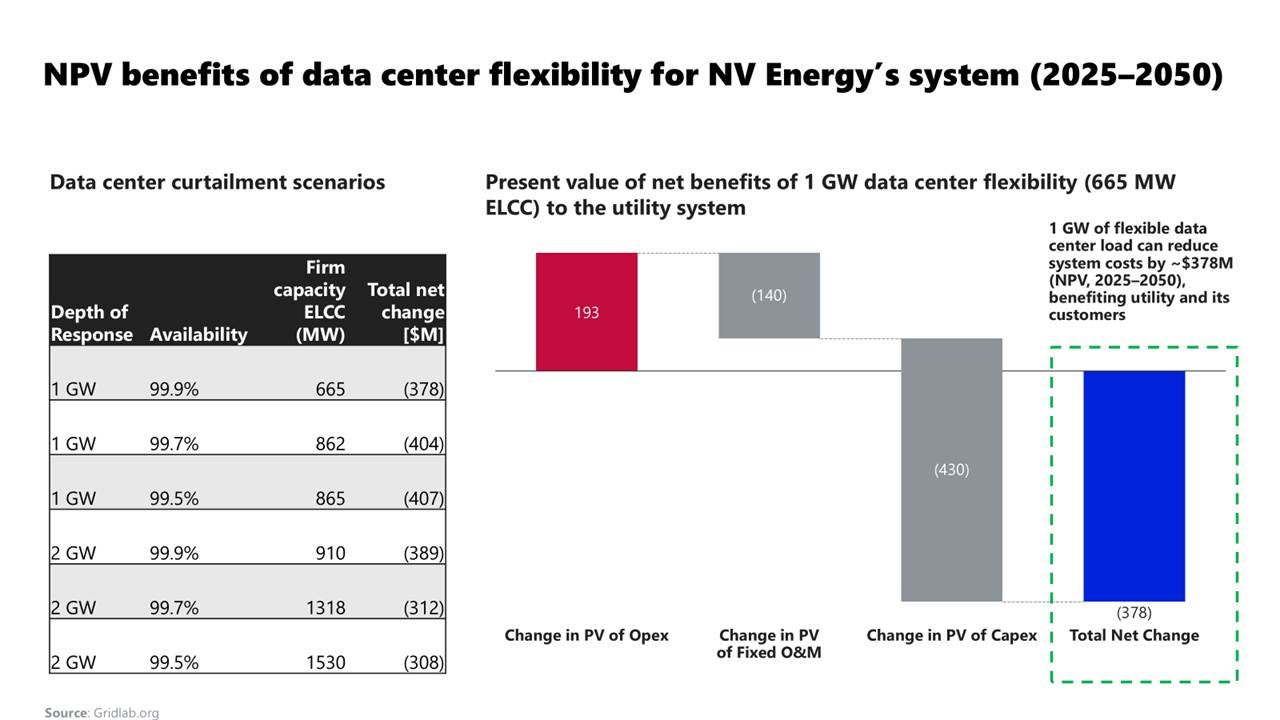

a) Transmission and capacity deferral

The biggest advantage for utilities is the ability to defer or avoid new transmission and peaking generation, both of which can be very expensive. Standard planning sizes the system for rare peak demands, so even modest flexibility can save significant capital costs. In constrained regions such as Northern Virginia or the Northeast, the per-MW value of deferral is particularly high. As noted earlier, the GridLab-NV Energy system review illustrates the possible scope: about $300–400M+ in NPV from 1–2 GW of curtailment, achieved by avoiding capacity additions and transmission upgrades, equal to 0.6–1.5 GW of firm capacity (67–87% ELCC; Figure 24). This analysis shows how targeted flexibility can serve as a “non-wires alternative” that reduces the need for new infrastructure while maintaining reliability. These advantages grow across markets as the number of participants increases.

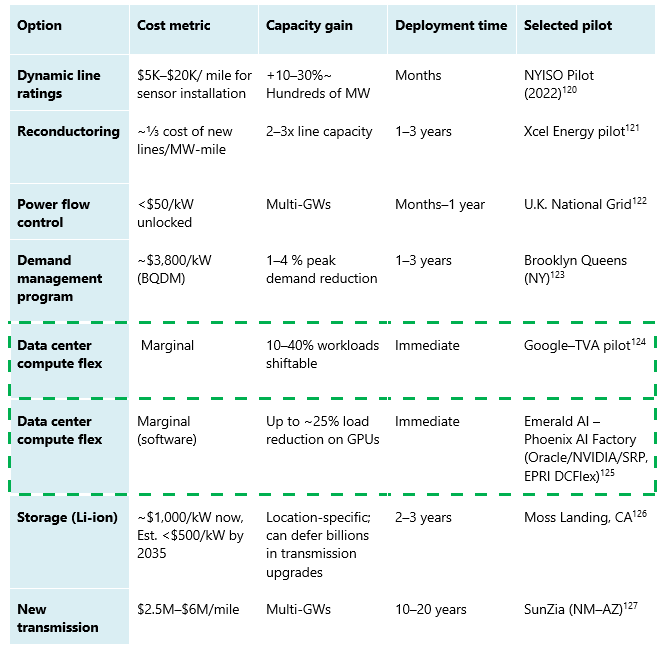

b) Comparative cost of grid capacity options

The Information Technology and Innovation Foundation’s report compared the costs of grid-enhancing technologies (GETs) with the costs of new transmission (Table 1). Dynamic line ratings, advanced reconductoring, modular power flow controls, and topology optimization software can unlock gigawatts of new headroom. These tools can often be deployed within months to a few years. Non-wires alternatives show similar value. Programs such as the Brooklyn Queens Demand Management project can reduce peak load by 1–4%, but at a much higher cost of about $3,800/kW (roughly $3.8B/GW). While utilities generally pass GETs investments on to ratepayers, most studies find that the congestion and curtailment savings outweigh capital costs and lower total system costs relative to a build-new-lines approach.

Within this landscape, data center flexibility stands out because it relieves grid stress at the source of the problem. Compared to GETs, its costs are marginal and its implementation timelines are much shorter. Unlike GETs’ grid hardware, which utilities can finance and recover through the rate base, much of the flexibility investment sits behind the meter (BTM). As mentioned previously, this shifts the burden to data center operators and developers and raises questions about cost sharing, incentives, and the design of performance-based programs.

Additional Sources: 120, 121, 122, 123, 124, 125, 126, 127

c) Improved operations, lower exposure, and reliability benefits

As mentioned previously, flexibility changes the demand curve. Instead of forcing utilities to chase data center peaks with new peaking generation assets and emergency actions, flexibility helps manage around those peaks using existing, underused assets. During high-stress periods, flexible data centers can trim or shift their usage, easing loading on constrained substations and transmission corridors and giving grid operators more headroom to ride through contingencies. This translates into fewer hours with extreme net load, smoother ramps, and less frequent reliance on the most expensive resources on the system.

Those operational benefits lower risk profiles for utilities and their regulators. As flexibility reduces the number of times the system bumps into thermal and voltage limits, utilities can defer or resize projects that would be justified solely by a handful of worst-case hours. Instead, capital can go towards network reinforcements and cleaner resources, which can result in higher utilization and broader customer benefits (better tariffs and rate structures). Avoiding and deferring greenfield projects also reduces the likelihood of encountering hurdles with permitting, litigation, community opposition, and construction cost overruns. In addition, utilities can treat data centers as partners that not only keep the existing infrastructure within safe limits but also reduce the need for challenging last-minute emergency demand response.

d) Steady capacity value

Earlier sections showed how small percentages of flexible operation can unlock meaningful grid capacity. Capacity planning frameworks make this explicit through Effective Load Carrying Capability (ELCC). Just as planners estimate how much firm capacity a new storage or gas unit contributes to meeting peak demand, they can quantify the contribution from reliably controllable load.

A portfolio of data centers that consistently reduces demand during the system’s highest-risk hours functions like a “virtual resource” that lowers net peak and, in turn, reduces the amount of new firm capacity needed to meet reliability targets. Under reasonable assumptions about duration, call frequency, and performance, studies show that 1 GW of multi-hour flexibility can offset roughly two-thirds to more than four-fifths of that amount in new firm capacity additions.

In markets with capacity auctions, this accredited ELCC shows up as a “bid-able” resource that qualifies and gets paid, just like a virtual power plant. In vertically integrated regions, the same value is captured in resource plans and reserve studies. In both cases, well‑designed flexibility programs let planners rely on demand‑side reductions instead of building as much new supply‑side capacity.
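The ELCC arithmetic above can be sketched in a few lines. This is a minimal illustration, not a planning model: it assumes the firm-capacity offset is simply flexible capacity multiplied by an ELCC fraction, and it uses the 67–87% range cited earlier in this chapter. The function name is hypothetical.

```python
def firm_capacity_equivalent(flexible_mw: float, elcc: float) -> float:
    """Firm capacity (MW) offset by flexible load at a given ELCC fraction.

    Simplifying assumption: offset = flexible capacity x ELCC. Real
    accreditation depends on duration, call frequency, and performance.
    """
    if not 0.0 <= elcc <= 1.0:
        raise ValueError("elcc must be a fraction between 0 and 1")
    return flexible_mw * elcc


flexible_mw = 1_000.0  # 1 GW of multi-hour flexibility, as in the text
for elcc in (0.67, 0.87):  # ELCC range cited earlier in the chapter
    offset = firm_capacity_equivalent(flexible_mw, elcc)
    print(f"ELCC {elcc:.0%}: about {offset:.0f} MW of firm capacity offset")
```

In practice the ELCC value itself is an output of a reliability study, so a sketch like this is only useful for translating an accredited percentage into megawatts.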

e) Carbon emissions

Flexibility can cut emissions by moving load to cleaner hours and avoiding carbon-intensive generation. It also reduces pressure to build new peaking, natural gas, or coal capacity, strengthening reliability and letting the grid make better use of existing renewable resources.

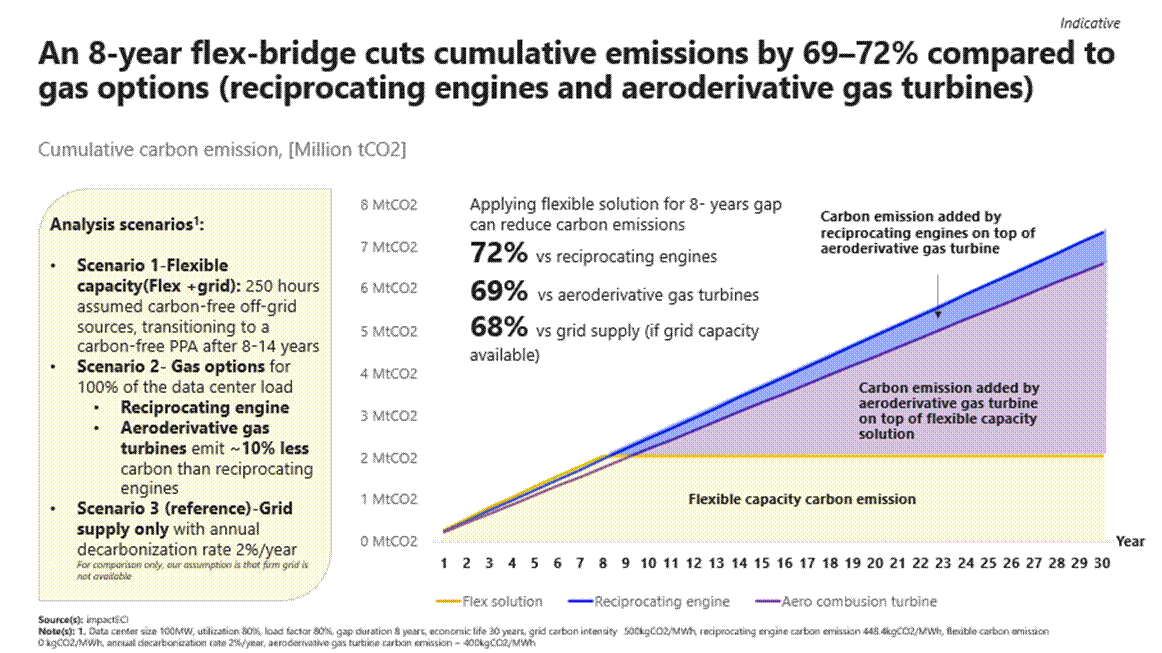

In our recent article “The Bridge is Flexibility, Not Natural Gas”, impactECI built a simplified model comparing the emissions impact of a 100 MW data center over an eight-year flexibility bridge in three operating scenarios.

The modeled cases included:

Flexibility (flex + grid): 250 hours of annual operation of carbon-free off-grid capacity for eight years before transitioning to a carbon-free PPA

Gas option: reciprocating engines emitting roughly 448 kgCO2/MWh and aeroderivative turbines emitting about 400 kgCO2/MWh

Grid only: grid’s carbon intensity 500 kgCO2/MWh, assuming a 2% annual decarbonization rate, included as a baseline but still dependent on timely interconnection

The eight-year flexibility bridge outperforms both reciprocating engines and aeroderivative turbines, cutting cumulative emissions by roughly 69 to 72%. It also performs better than a standard grid-supply pathway, reducing carbon intensity by about 68% while preserving reliability and avoiding new fossil investments (Figure 25).

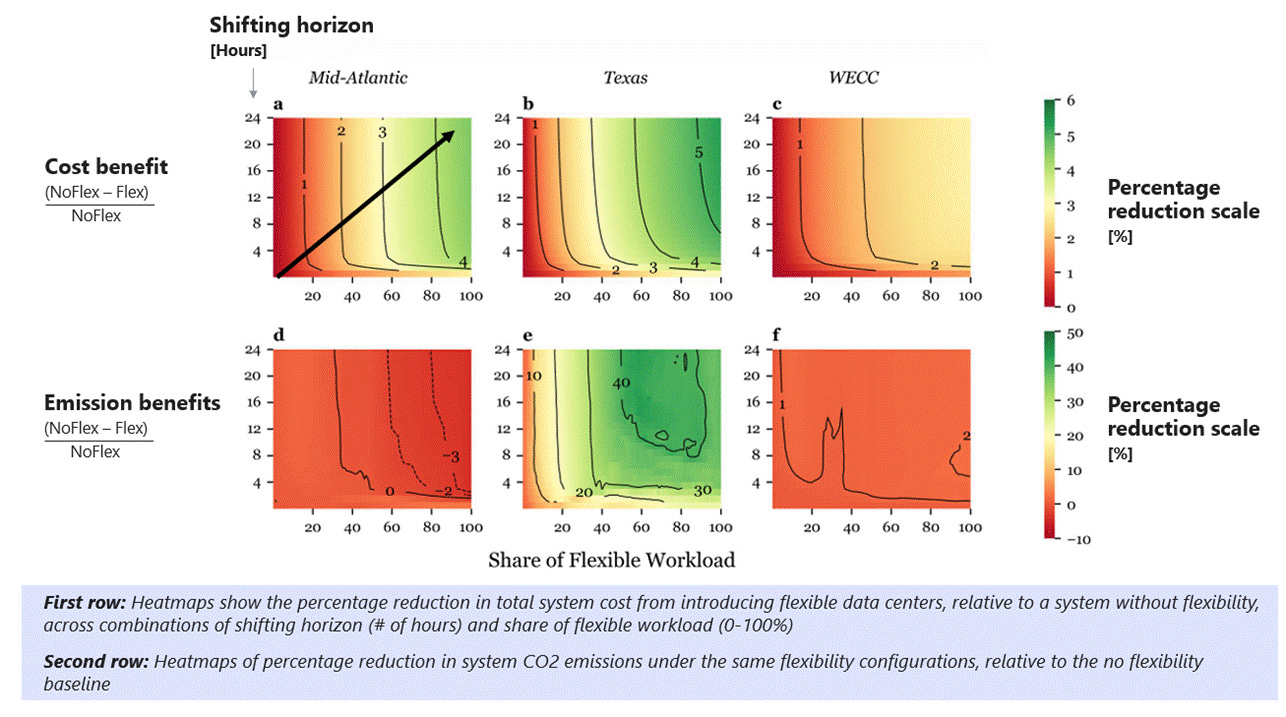

The broader system impacts vary by region. The Massachusetts Institute of Technology’s 2025 study “Flexible Data Centers and the Grid” finds that flexibility always lowers system costs, but the emissions impacts depend heavily on the grid generation mix and its carbon intensity during shifted hours. The analysis highlights three regions (Figure 26):

Texas (ERCOT) sees both major cost savings and emissions reductions (greener heatmap). The grid has a high and growing share of wind and solar, supported by flexible gas generation. When data center load shifts into hours with surplus renewables, it avoids expensive peakers and reduces total system emissions. According to eGRID 2023, Texas’s carbon intensity is roughly 330 kgCO₂/MWh for total output and about 564 kgCO₂/MWh for non-baseload. These intensities continue to decline due to increasing renewables penetration.

The Mid-Atlantic sees strong cost benefits but probably experiences an increase in emissions. The region has lower renewable penetration and now relies more on coal and gas. When load shifts into cheaper hours, it can pull from fossil generation on the margin. This boosts utilization of carbon-intensive baseload units even as overall system costs fall. The Mid-Atlantic’s carbon intensity reaches up to 440 kgCO₂/MWh for total output, with non-baseload emissions in the 500–800 kgCO₂/MWh range.

Western Interconnect (WECC) shows modest cost and emissions benefits. Hydro and renewables already make up a high share of supply, and system peaks are less constrained. With cleaner marginal generation and fewer high-cost peak hours, there is less room for flexibility to reshape emissions outcomes. WECC subregions’ carbon intensity reaches up to 470 kgCO₂/MWh for total output, with non‑baseload in the 430–730 kgCO₂/MWh range.
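The regional contrast above comes down to simple arithmetic: the emissions effect of shifting load depends on the marginal carbon intensity of the hour the load leaves versus the hour it lands in. The sketch below illustrates that relationship. The function name and the two example intensities are hypothetical; the intensities are chosen from within the 500–800 kgCO₂/MWh non-baseload range quoted for the Mid-Atlantic and are not taken from the MIT study.

```python
def shift_emissions_delta_kg(
    shifted_mwh: float,
    from_intensity_kg_per_mwh: float,
    to_intensity_kg_per_mwh: float,
) -> float:
    """Change in emissions (kg CO2) when load moves between two hours.

    Positive means the shift increases emissions; negative means it cuts them.
    Assumes the marginal intensity of each hour is known and unaffected by
    the shift itself, which is a simplification.
    """
    return shifted_mwh * (to_intensity_kg_per_mwh - from_intensity_kg_per_mwh)


# Illustrative only: 100 MWh moved from a gas-marginal hour (500 kg/MWh)
# into a cheaper hour where coal is on the margin (800 kg/MWh).
increase = shift_emissions_delta_kg(100.0, 500.0, 800.0)
print(f"Shift into dirtier hour: {increase / 1000:+.1f} tCO2")

# The same shift in the opposite direction cuts emissions by the same amount.
decrease = shift_emissions_delta_kg(100.0, 800.0, 500.0)
print(f"Shift into cleaner hour: {decrease / 1000:+.1f} tCO2")
```

This is why a cost-driven dispatch signal alone does not guarantee lower emissions: cheap hours are only clean hours where the marginal generator is low-carbon.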

4.1.2 Value creation for data centers

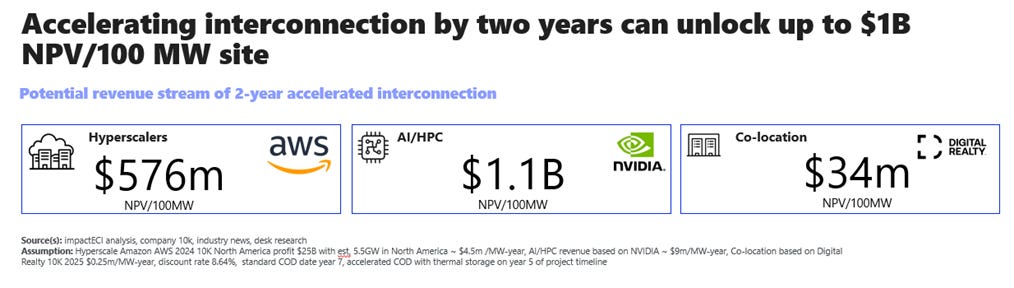

a) Accelerate grid connection

Utilities are starting to prioritize connecting adaptable facilities, resulting in faster energization and revenue generation (Figure 27). The shift is already visible in federal guidance (U.S. Department of Energy (DOE) and Federal Energy Regulatory Commission) and in utility practices. Flexible projects can move ahead in the queue when they carry built-in limits on their peak impact and come with clear operating rules during grid stress.

For developers, earlier grid connection directly reduces cost and risk. A shorter gap between construction and energization shrinks the period when land, equipment, and financing costs accumulate without revenue. Faster connection and earlier revenue strengthen project economics and reduce the opportunity cost of customer contracts or business cases eroding in the queue. Flexibility also replaces uncertainty around power availability with a predictable ramp of conditional service (contractual flexibility). That makes it much easier to phase build-out, leasing, and hiring.

Data center operators trade a predictable amount of operational risk for a major reduction in the risk of delay, redesign, lost revenue, and stranded assets. For many developers, flexibility is no longer optional; it is becoming the key enabler for accelerating grid connection and unlocking revenue sooner.

b) New grid services revenue

Flexible data centers can monetize their capabilities by participating in demand-response, capacity, and ancillary service markets, earning payments for curtailment, spinning reserve, or frequency regulation. Even without entering into a contractual flexibility arrangement with utilities, data centers can still earn value by participating in traditional demand-response (DR) programs or by joining aggregators or virtual power plants (VPPs) that pool flexible load across their fleets.

In PJM Interconnection, currently one of the most profitable capacity programs, recent auctions (2025/2026) have cleared at around $98k–170k/MW-year, or $269–466/MW-day, up from just $29/MW-day in the 2024/2025 auction. At these levels, aggregators can pay large loads in the range of $70k–100k+/MW-year while maintaining a margin. GridBeyond recently offered a 10-year guaranteed payment of $73k/MW-year, with a 100% revenue share. The program provides predictable, hedged income even when PJM capacity prices fall, protecting participants from price volatility.
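The per-day and per-year figures above are linked by a simple unit conversion, sketched below. The conversion assumes a 365-day delivery year. The margin function is a hypothetical illustration only: it ignores capacity accreditation, performance penalties, fees, and contract terms, and it does not describe any aggregator's actual pricing.

```python
DAYS_PER_YEAR = 365  # assumption: non-leap delivery year


def per_mw_day_to_per_mw_year(price_per_mw_day: float) -> float:
    """Convert a capacity price from $/MW-day to $/MW-year."""
    return price_per_mw_day * DAYS_PER_YEAR


def aggregator_margin(clearing_per_mw_year: float, payout_per_mw_year: float) -> float:
    """Hypothetical gross margin per MW-year: clearing revenue minus payout."""
    return clearing_per_mw_year - payout_per_mw_year


# Prices quoted in the text, in $/MW-day.
for label, price in [("2024/2025", 29), ("2025/2026 low", 269), ("2025/2026 high", 466)]:
    yearly = per_mw_day_to_per_mw_year(price)
    print(f"{label}: ${price}/MW-day is about ${yearly:,.0f}/MW-year")

# Illustrative margin if capacity clears at the low end and the load is paid $73k.
low_clearing = per_mw_day_to_per_mw_year(269)
margin = aggregator_margin(low_clearing, 73_000)
print(f"Margin over a $73k/MW-year payout: ${margin:,.0f}/MW-year")
```

The same conversion shows why the 2024/2025 price of $29/MW-day, roughly $10.6k/MW-year, would not have supported payouts in the $70k+ range.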

Capacity is only one component of the revenue stack. Additional compensation from emergency events and from ancillary services (such as frequency regulation) can further enhance total value. However, total economic value depends on local grid conditions, load, and site-specific performance. For data center flexibility, the combined revenue streams from grid services can be meaningful, turning what has historically been a pure cost center into a recurring performance-based revenue opportunity.

c) Operational and sustainability gains

Load-shifting and optimization reduce electricity bills while enabling higher use of clean power. For operators under pressure to meet corporate decarbonization goals, flexibility offers a tangible path to improve carbon intensity without sacrificing uptime.

Portfolio-level workload orchestration: Flexibility lets operators view their entire global fleet as a single virtual asset, shifting non-urgent or AI/ML workloads between regions based on real-time prices, marginal emissions, and local grid stress.

Regulatory and disclosure alignment: Flexibility also aligns data center operations with emerging regulatory frameworks, such as hourly carbon accounting, grid‑interactive efficient buildings (GEB) requirements, and SEC climate disclosures. There is also growing public support for transparent emissions reporting, as stakeholders are looking for real-world carbon reduction, not just REC purchases. Flexibility makes “24/7 carbon-free energy” or similar claims more credible with real-world impacts.

Resilience under constrained grids: Four flexibility pillars provide data centers with more options during grid stress, avoiding overbuilding and reliance on backup power. This reduces fuel volatility risk, lowers local pollutant exposure, and cuts O&M on gensets. In markets with aging infrastructure or frequent reliability challenges, flexibility becomes a resilience enhancement rather than a compromise.
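The portfolio-level orchestration described above can be sketched as a simple placement rule. This is a minimal illustration under stated assumptions, not an operator's actual scheduler: the `RegionSignal` type, the `pick_region` function, the carbon price used to combine price and emissions into one cost, and all example values are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class RegionSignal:
    """Hypothetical snapshot of real-time conditions in one region."""
    name: str
    price_usd_per_mwh: float
    marginal_kg_co2_per_mwh: float
    grid_stressed: bool


def pick_region(
    signals: Sequence[RegionSignal],
    carbon_price_usd_per_tonne: float = 50.0,  # assumed internal carbon price
) -> Optional[RegionSignal]:
    """Pick the unstressed region with the lowest energy-plus-carbon cost.

    Returns None when every region is under grid stress, in which case a
    deferrable workload would simply wait.
    """
    candidates = [s for s in signals if not s.grid_stressed]
    if not candidates:
        return None

    def combined_cost(s: RegionSignal) -> float:
        carbon_cost = carbon_price_usd_per_tonne * s.marginal_kg_co2_per_mwh / 1000.0
        return s.price_usd_per_mwh + carbon_cost

    return min(candidates, key=combined_cost)


signals = [
    RegionSignal("region-a", 40.0, 600.0, grid_stressed=False),  # cost 70 $/MWh
    RegionSignal("region-b", 55.0, 200.0, grid_stressed=False),  # cost 65 $/MWh
    RegionSignal("region-c", 20.0, 100.0, grid_stressed=True),   # excluded
]
choice = pick_region(signals)
print(choice.name if choice else "defer workload")
```

A production scheduler would also need to account for data locality, latency, SLA constraints, and available capacity, none of which are modeled here.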

d) Competitive advantage

In constrained markets, demonstrating flexibility has become a competitive advantage. For example, facilities that can manage load receive favorable regulatory consideration (preferential rates and targeted incentives).

Enabling access to grid-constrained locations: Flexibility becomes a key enabler, unlocking access to strategic regions where power constraints would otherwise block new development.

Differentiated value proposition for tenants: For colocation operators, flexibility creates a new category of value for tenants. Tenants can participate in demand-flex programs and dynamic tariffs without building their own telemetry, controls, or regulatory compliance processes. Operators can offer tenants verifiable lower-carbon operations, flexible pricing tied to grid participation, or “green tier” SLAs that resonate with ESG-conscious customers. Flexibility can be a differentiated advantage in a crowded market.

Faster power, faster core growth: When projects come online sooner, operators can begin selling more of their core products, such as cloud services, AI capacity, colocation space, or SaaS, years ahead of competitors who are still waiting in the queue. In the AI/HPC market, where speed to market matters, this provides a pronounced advantage in gaining market share and winning high-value long-term contracts.

e) Goodwill and corporate reputation

It’s no secret that data centers are often blamed for driving expensive grid upgrades that end up on customer bills. Historically, utilities have had to build extra transmission lines and peaking plants to serve large, inflexible loads that run 24/7. As the market evolves, utilities and system operators are setting rules that require data center developers to fund network upgrades they trigger, rather than spreading those costs across other ratepayers.

Flexibility changes that story: data centers can help the grid, not just strain it. By adjusting during peak periods, they reduce the need for costly new infrastructure and help keep power affordable for everyone. For utilities, flexible customers are easier to connect and plan around. For regulators, they demonstrate that digital growth can align with public interest. And for communities, they prove that data centers can coexist with local priorities instead of driving costs and pollution.

At the same time, flexibility builds trust and reputation. Operators who take part in these programs show leadership in sustainability and responsibility. They earn credibility with utilities, regulators, and investors and are seen not as burdens on the grid but as contributors to innovation and resilience. In an industry under close public and investor scrutiny, flexibility creates business value and a positive corporate reputation.

4.1.3 Value creation for ratepayers and society

a) More stable and affordable rate structures

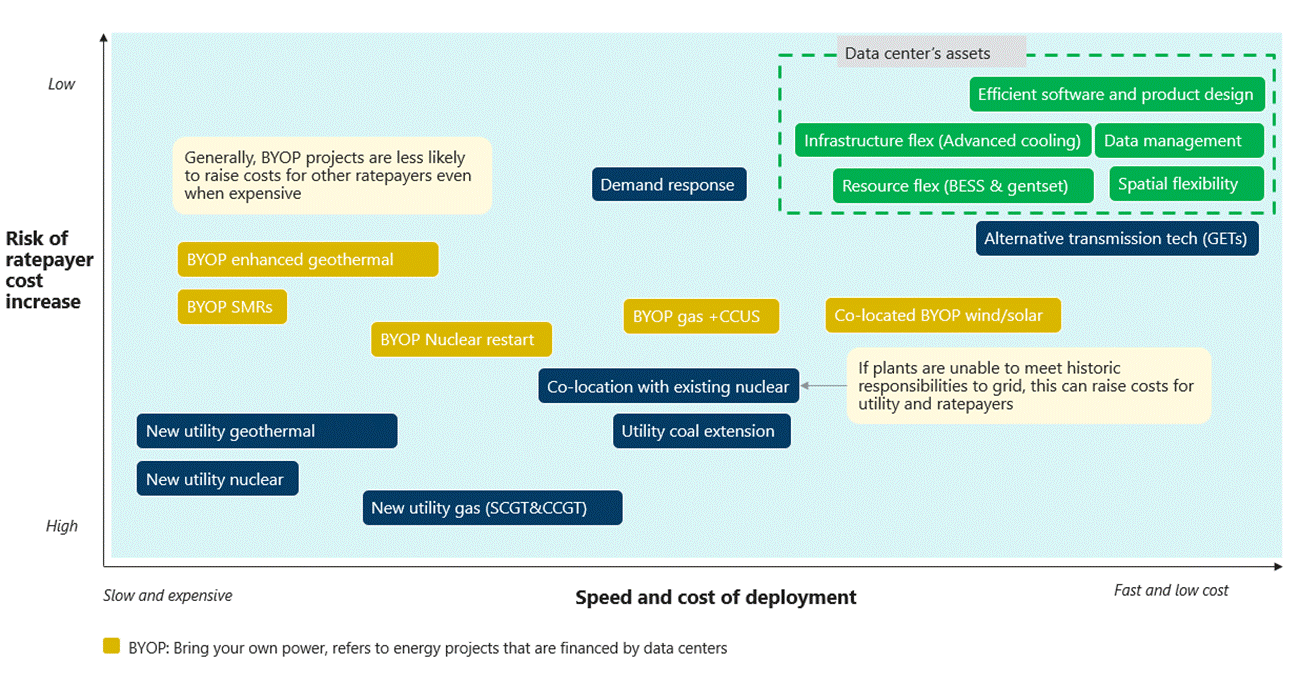

An RMI analysis compared the cost and speed of deploying various data center solutions against the risk of increased cost for ratepayers. Demand-response programs can be deployed relatively quickly and with minimal impact on customer bills. By contrast, options like new utility-owned gas, nuclear, or long-lead transmission sit in the “slow, expensive, high-risk” category, where overruns and under-utilization are most likely to be passed down to customer bills (Figure 28).

Flexibility, including infrastructure, spatial (compute), and resource flexibility, sits in the upper corner of the RMI framework: “fast to deploy, low cost, and low impact on ratepayers”. Data centers can invest in software, controls, and operational integration for spatial flex. They can also use backup gensets and battery energy storage systems (BESS) for resource flex. Ratepayers see most of the upside: fewer peaking plants, smaller or deferred transmission builds, and lower congestion costs, rather than large new assets added to bills.

Emerging co-location and “bring your own power” (BYOP) options, such as gas, nuclear plants, nuclear restarts, and enhanced geothermal plants, sit in the middle of the spectrum. These BYOP investments are exposed to volatile fuel prices, supply chain bottlenecks, and increasing construction costs, and they face a high risk of becoming stranded assets. Generally, these projects, financed by data centers, are less likely to raise costs for other ratepayers, even when expensive. However, when utilities own these assets, that risk flows directly to ratepayers through demand charges and fixed charges, as shown in the new utility geothermal and new utility nuclear options (in the lower-left corner).

b) Community and ecological advantages

As brought up previously, flexibility allows utilities and operators to utilize cleaner resources and reduce reliance on baseload fossil generation, peakers, and diesel backup during grid stress. Modeling studies reveal that potential system-wide

The environmental benefits and local health impacts are significant. For example, a 3.5 GW gas plant could result in $31M/year in health costs from emitted PM₂.₅, and more than $625M cumulatively by 2040. By shifting both existing data centers and new builds toward flexible, grid-interactive operations, local communities can capture the economic upside while avoiding potential air-quality and public-health burdens.

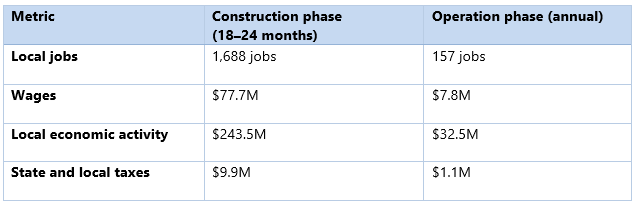

Faster deployment spurs construction jobs, expands tax bases, and strengthens local supply chains. These effects expand as digital and energy infrastructure co-develop. According to the U.S. Chamber of Commerce CTEC, a typical 100 MW facility creates meaningful local impact from day one (Table 2). During construction, it employs roughly 1,600 local workers over 18–24 months, paying out about $78M in wages and supporting over $240M in local economic activity. Once operational, that same facility sustains around 150 permanent jobs, adds $7.8M in annual wages, and contributes about $32M each year to the surrounding economy. It also generates close to $10M in state and local tax revenue during construction and another $1M annually, helping fund schools, services, and public amenities.

The benefits extend well beyond direct jobs. Many operators invest in infrastructure upgrades, roads, power lines, water systems, and broadband that serve the wider community. Partnerships with schools and technical colleges create training and STEM programs, building a stronger local talent pipeline for future tech and energy roles.

4.2 Cost estimates, risks, and the first-mover’s burden

Flexibility creates meaningful value, but its implementation comes with real costs and risks. Early movers often bear the risks, opportunity costs, and expense of integration, controls, and compliance when the ecosystem is building standards and business models. A clear understanding of these cost drivers and risk profiles is critical for designing programs and tariffs that keep the value proposition compelling for both utilities and data center operators.

Today’s pilots still rely on legacy DER and interconnection standards such as Rule 21, CSIP and IEEE 2030.5, UL 1741, and IEEE 1547, but there is not yet a mature standard for data center-scale flexibility controllers. UL 3141 for Power Control Systems (PCS) is evolving as the most likely framework for multiple DERs and controllable loads. Yet, most utility tariffs and interconnection procedures refer to it as a principle rather than as a firm requirement. As a result, early movers negotiate site by site with utilities and RTOs/ISOs, carrying engineering and legal risks.

Figure 29 shows how California Public Utilities Commission’s OpFlex pilots (Pacific Gas and Electric Company, Southern California Edison (SCE), and San Diego Gas & Electric) are laying the groundwork for contractual flexibility. The early opt-in assets are storage (6 MW) and EV charging (4.5 MW), yet the real value is in the learning curve. The signaling, control, and standards work taking place now will set the template for data center flexibility as the programs grow.

a) Site communications and controls costs

Because the technology is still new and solutions are highly customized, the cost for large customers to implement the required communications and control systems remains relatively high, often in the range of $20k–$50k+ per site, according to the PG&E OpFlex pilot report. Some participants estimate that costs rise even more with complex integrations involving cloud applications, California ISO Remote Intelligent Gateways (RIGs), local site controllers, and DER devices. In addition, data centers may incur even higher costs due to operational and system complexity or the internal application updates needed to support “flexible” operations.

b) Stranded asset and retrofit risk

Because the system‑level standard is still evolving, existing data center fleets and early adopters risk building control architectures that do not match the eventual “official” PCS pattern. If future tariffs, codes, or certifications require specific UL‑3141‑listed PCS architectures, existing and pilot sites may face:

Retrofit costs to replace or re-certify existing controllers and switchgear integration.

Stranded software and integration work, if their current signaling and control schemes do not satisfy future test protocols or verification rules.

Control layers monitor load shedding, backup generation, storage, and sometimes customer workloads, so swapping them is relatively expensive and operationally risky for existing data center fleets.

c) Bespoke, site-specific compliance

Flexibility pilots show that there is not yet a standard, plug-and-play compliance path for data center flexibility. Compliance is still built one site at a time. Each facility must work with the utility to interpret signals, integrate controls, set up telemetry, and document protections for SLAs and safety. Nothing is standardized, and no two implementations look the same.

Because there is no certified, ready-made control stack for data center flexibility, operators are relying on bespoke engineering and custom integrations to participate. This makes every project slower, more expensive, and harder to replicate. It also shifts the burden onto early movers who must solve problems that standards and protocols have not yet caught up to.

Until there is a consistent architecture that utilities and data centers can both rely on, participation will keep depending on one-off engineering instead of repeatable, scalable solutions.

d) Program design, tariff, and regulatory risk

Early participants also face program and tariff risk. Pilots and emerging hourly flexibility programs are still evolving as utilities refine performance windows, baselines, and settlement rules. If a data center invests heavily in a specific control approach and tariffs later shift, some of that investment may lose value or need to be reworked.

Regulatory risk flows in both directions. Utilities that lean heavily on customer-side flexibility without clear, performance-based frameworks may face skepticism from commissions about reliability or cost allocation. Predictable multi-year program design, coupled with rigorous measurement and verification, is essential so that early investments are bankable rather than speculative.

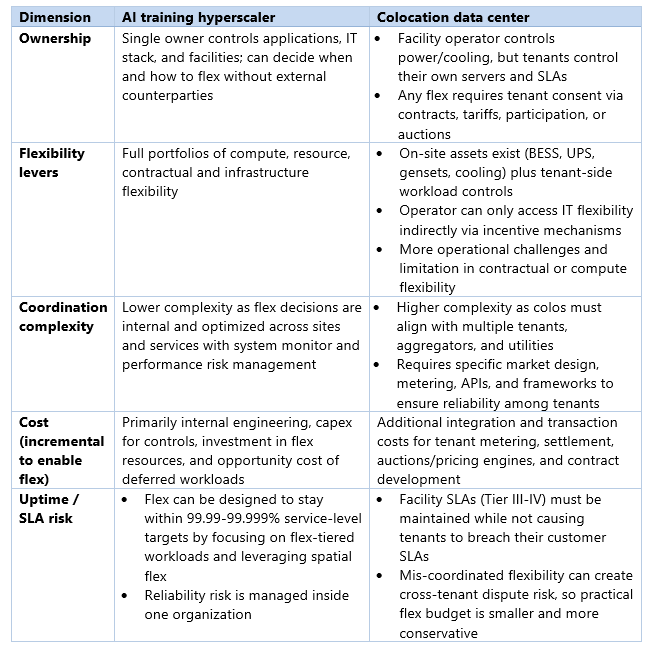

e) Some additional challenges for colocation data centers

Colocation data centers (colos) face the same challenges as hyperscalers, plus an added layer of commercial and contractual complexity (Table 3).

Enablement capex and integration cost: Colos must invest in advanced metering, sub-tenant monitoring, control systems, necessary upgrades, and integration platforms to safely participate in flexibility programs. These costs sit on top of a standard Tier III/Tier IV build. They also incur software and process costs for running pricing or auction mechanisms, like ColoEDR, plus the settlement and tenant-billing systems those programs require. Emerging “tenant-aware schedulers” add another layer by integrating OpenADR or ISO signals, carbon data, and workload orchestration across private cloud, colo, and public cloud environments, increasing integration and data-engineering requirements.

Transaction and governance costs: Because incentives are split between facility operators and tenants, colos must build and maintain contracts, tariffs, and marketplace or auction rules that both meet regulatory requirements and appeal to tenants. That means deciding which workloads can flex, how payments are shared, and how to resolve performance or SLA issues. With no real playbook to follow and a wide mix of tenant needs, this quickly becomes a heavy legal, administrative, and operational lift.

The next chapter

Across the board, the potential economic benefits are enormous, but the value is realized only when flexibility can be scaled. The benefits outlined in this chapter, from avoided transmission and peaking investments to earlier data center revenue and stronger local economic activity, depend on turning flexibility from bespoke pilots into scalable projects.

The next chapter, “Part 5: Implementation and deployment pathway”, takes that next step. It looks at how utilities, hyperscalers, and regulators can shift from today’s proofs of concept to the first and second deployments and ultimately to a system where flexible, grid-interactive data centers are the norm.